- Introduction

- Core Concepts

- Python Instrumentation

- Java/Spring Boot Instrumentation

- OTel Collector Configuration

- Sampling Strategies

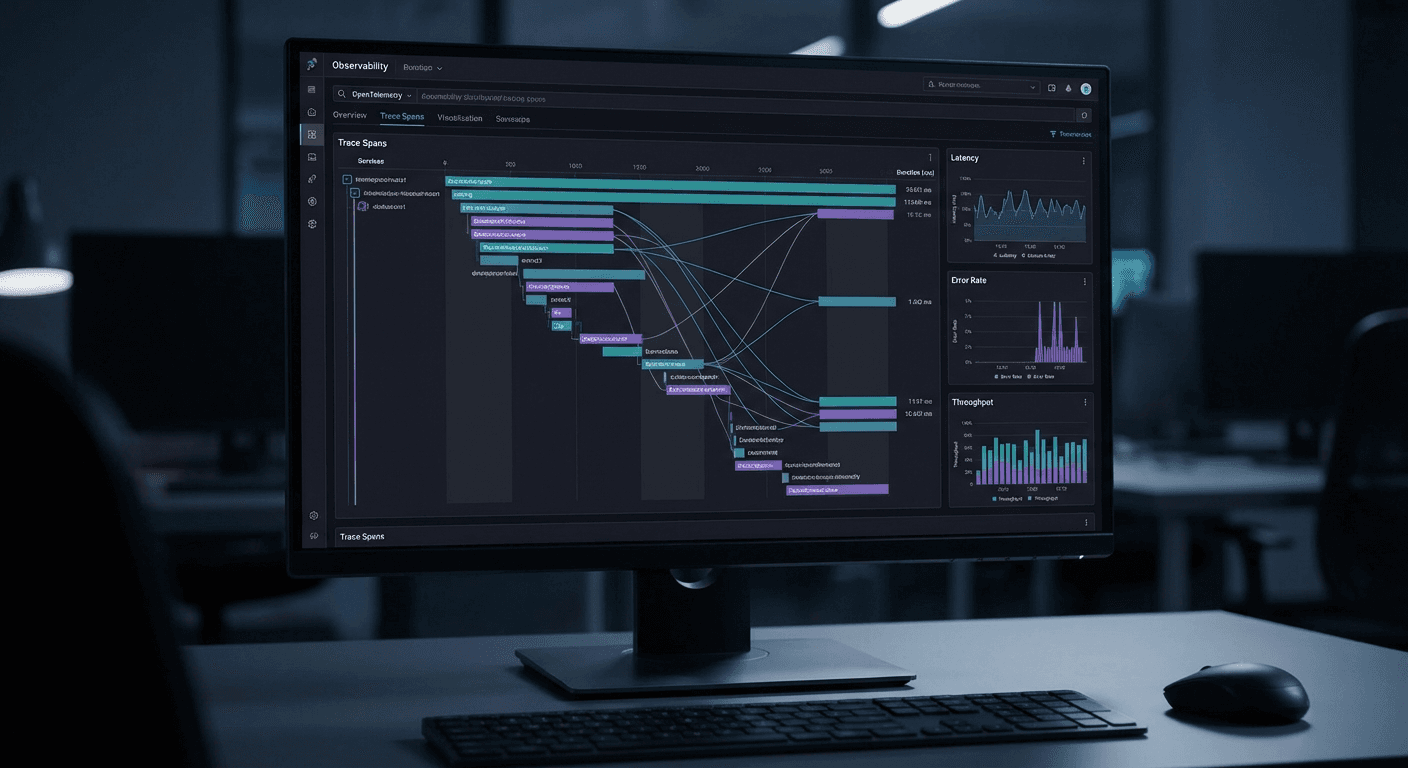

- Using Jaeger UI

- Best Practices

- Quiz

- Conclusion

- References

Introduction

In microservice environments, a single user request can pass through dozens of services. Distributed tracing is essential for identifying where bottlenecks occur and which services are slow.

OpenTelemetry (OTel) is a CNCF project that has become the standard for Observability: a vendor-neutral framework that unifies Traces, Metrics, and Logs.

Core Concepts

Trace, Span, Context

    Trace (the journey of an entire request):
    ├── Span A: API Gateway (50ms)
    │   ├── Span B: Auth Service (10ms)
    │   ├── Span C: Order Service (35ms)
    │   │   ├── Span D: Database Query (15ms)
    │   │   └── Span E: Payment Service (18ms)
    │   │       └── Span F: Bank API Call (12ms)
    │   └── Span G: Notification Service (5ms, async)
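To find where the time actually goes in a tree like this, it helps to subtract each Span's children from its own duration. The sketch below is only an illustration: `SpanNode` and `self_times` are hypothetical helpers, not OpenTelemetry types, and the calculation assumes child Spans run one after another.

```python
from dataclasses import dataclass, field


@dataclass
class SpanNode:
    """Hypothetical stand-in for a finished Span; not an OpenTelemetry type."""
    name: str
    duration_ms: int
    children: list["SpanNode"] = field(default_factory=list)


def self_times(root: SpanNode) -> dict[str, int]:
    """Return each Span's duration minus the time covered by its direct children.

    Assumes children run sequentially; overlapping (parallel) children would
    need interval merging instead of a plain sum.
    """
    result: dict[str, int] = {}
    stack = [root]
    while stack:
        node = stack.pop()
        child_total = sum(child.duration_ms for child in node.children)
        result[node.name] = max(node.duration_ms - child_total, 0)
        stack.extend(node.children)
    return result


# The same tree as in the diagram above
trace_tree = SpanNode("API Gateway", 50, [
    SpanNode("Auth Service", 10),
    SpanNode("Order Service", 35, [
        SpanNode("Database Query", 15),
        SpanNode("Payment Service", 18, [SpanNode("Bank API Call", 12)]),
    ]),
    SpanNode("Notification Service", 5),
])

times = self_times(trace_tree)
slowest = max(times, key=times.get)  # Span with the largest self time
```

With these numbers, the Order Service spends only 2ms in its own code, and the Database Query is the largest single contributor at 15ms.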

    # Structure of a Span
    {
      "traceId": "abc123def456...",       # Unique Trace ID (128-bit)
      "spanId": "span789...",             # Unique Span ID (64-bit)
      "parentSpanId": "parent456...",     # Parent Span ID
      "name": "POST /api/orders",         # Span name
      "kind": "SERVER",                   # CLIENT, SERVER, PRODUCER, CONSUMER, INTERNAL
      "startTime": "2026-03-03T12:00:00Z",
      "endTime": "2026-03-03T12:00:00.050Z",
      "status": {"code": "OK"},
      "attributes": {                     # Metadata
        "http.method": "POST",
        "http.url": "/api/orders",
        "http.status_code": 201,
        "service.name": "order-service"
      },
      "events": [                         # Events within the Span
        {
          "name": "order.validated",
          "timestamp": "2026-03-03T12:00:00.010Z",
          "attributes": {"order_id": "ORD-123"}
        }
      ]
    }

Context Propagation

    Context propagation between services (IDs abbreviated):

    [Service A]                      [Service B]
        │                                │
        │  traceparent: 00-abc123-span1-01
        │ ─────────────────────────────> │
        │                                │ (Creates child Span with same traceId)
        │                                │
        │                                │  traceparent: 00-abc123-span2-01
        │                                │ ──────────> [Service C]

    HTTP Header:
    traceparent: 00-{traceId}-{spanId}-{flags}
    (traceId is 32 hex characters, spanId is 16, flags is 2)
    Example: traceparent: 00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01
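To make the header layout concrete, here is a minimal parsing sketch using only the standard library. `TraceParent`, `parse_traceparent`, and `child_of` are hypothetical names for illustration; instrumented applications rely on the OpenTelemetry propagators rather than code like this. The sketch only handles the four-field version-00 layout, and the shortened IDs used in the diagram are placeholders that a strict parser would reject.

```python
import re
import secrets
from typing import NamedTuple, Optional

# Four-field layout: 2 hex version, 32 hex trace ID, 16 hex span ID, 2 hex flags
_TRACEPARENT_RE = re.compile(
    r"^(?P<version>[0-9a-f]{2})-(?P<trace_id>[0-9a-f]{32})-"
    r"(?P<parent_id>[0-9a-f]{16})-(?P<flags>[0-9a-f]{2})$"
)


class TraceParent(NamedTuple):
    version: str
    trace_id: str
    parent_id: str  # Span ID of the caller
    flags: str

    @property
    def sampled(self) -> bool:
        # The lowest bit of the flags byte is the "sampled" flag
        return bool(int(self.flags, 16) & 0x01)

    def to_header(self) -> str:
        return f"{self.version}-{self.trace_id}-{self.parent_id}-{self.flags}"


def parse_traceparent(header: str) -> Optional[TraceParent]:
    """Return the parsed header, or None if it is malformed."""
    match = _TRACEPARENT_RE.match(header.strip())
    if match is None:
        return None
    tp = TraceParent(**match.groupdict())
    # Version ff and all-zero IDs are invalid
    if tp.version == "ff" or set(tp.trace_id) == {"0"} or set(tp.parent_id) == {"0"}:
        return None
    return tp


def child_of(parent: TraceParent) -> TraceParent:
    """Header for an outgoing call: same trace ID, freshly generated span ID."""
    return parent._replace(parent_id=secrets.token_hex(8))


incoming = parse_traceparent("00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01")
outgoing = child_of(incoming).to_header()
```

The outgoing header keeps the trace ID and flags unchanged, which is what lets the backend stitch Spans from different services into one Trace.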

Python Instrumentation

Auto-Instrumentation (Zero-Code)

    # Install dependencies
    pip install opentelemetry-distro opentelemetry-exporter-otlp
    opentelemetry-bootstrap -a install  # Install auto-instrumentation packages

    # Run app with auto-instrumentation
    opentelemetry-instrument \
        --service_name order-service \
        --traces_exporter otlp \
        --metrics_exporter otlp \
        --exporter_otlp_endpoint http://otel-collector:4317 \
        python app.py

Manual Instrumentation (Fine-Grained Control)

    from opentelemetry import trace
    from opentelemetry.sdk.trace import TracerProvider
    from opentelemetry.sdk.trace.export import BatchSpanProcessor
    from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter
    from opentelemetry.sdk.resources import Resource
    from opentelemetry.semconv.resource import ResourceAttributes

    # 1. Configure TracerProvider
    resource = Resource.create({
        ResourceAttributes.SERVICE_NAME: "order-service",
        ResourceAttributes.SERVICE_VERSION: "1.2.0",
        ResourceAttributes.DEPLOYMENT_ENVIRONMENT: "production",
    })

    provider = TracerProvider(resource=resource)
    processor = BatchSpanProcessor(
        OTLPSpanExporter(endpoint="http://otel-collector:4317")
    )
    provider.add_span_processor(processor)
    trace.set_tracer_provider(provider)

    # 2. Create Tracer
    tracer = trace.get_tracer("order-service", "1.2.0")

    # 3. Create and use Spans
    @tracer.start_as_current_span("create_order")
    def create_order(order_data: dict) -> dict:
        span = trace.get_current_span()

        # Add attributes
        span.set_attribute("order.customer_id", order_data["customer_id"])
        span.set_attribute("order.total_amount", order_data["total"])
        span.set_attribute("order.item_count", len(order_data["items"]))

        # Add events
        span.add_event("order.validation_started")

        # Validation
        validate_order(order_data)
        span.add_event("order.validation_completed")

        # Process payment (child Span created automatically)
        payment_result = process_payment(order_data)

        # Record result
        span.set_attribute("order.id", payment_result["order_id"])
        span.set_status(trace.StatusCode.OK)
        return payment_result

    @tracer.start_as_current_span("process_payment")
    def process_payment(order_data: dict) -> dict:
        span = trace.get_current_span()
        span.set_attribute("payment.method", order_data.get("payment_method", "card"))

        try:
            result = payment_client.charge(order_data)
            span.set_attribute("payment.transaction_id", result["txn_id"])
            return result
        except Exception as e:
            span.set_status(trace.StatusCode.ERROR, str(e))
            span.record_exception(e)
            raise

FastAPI Integration

    from fastapi import FastAPI, Request
    from opentelemetry import trace
    from opentelemetry.instrumentation.fastapi import FastAPIInstrumentor
    from opentelemetry.instrumentation.httpx import HTTPXClientInstrumentor
    from opentelemetry.instrumentation.sqlalchemy import SQLAlchemyInstrumentor

    # db_engine, OrderRequest, and order_service are defined elsewhere in the application

    app = FastAPI()

    # Apply auto-instrumentation
    FastAPIInstrumentor.instrument_app(app)
    HTTPXClientInstrumentor().instrument()  # External HTTP calls
    SQLAlchemyInstrumentor().instrument(engine=db_engine)  # DB queries

    @app.post("/api/orders")
    async def create_order(request: Request, order: OrderRequest):
        # Add business context to current Span
        span = trace.get_current_span()
        span.set_attribute("order.customer_id", order.customer_id)
        span.set_attribute("order.region", order.shipping_region)

        result = await order_service.create(order)
        return result

Java/Spring Boot Instrumentation

Spring Boot Auto-Configuration

    <dependency>
        <groupId>io.opentelemetry.instrumentation</groupId>
        <artifactId>opentelemetry-spring-boot-starter</artifactId>
        <version>2.11.0</version>
    </dependency>

    # application.yml
    otel:
      service:
        name: order-service
      exporter:
        otlp:
          endpoint: http://otel-collector:4317
          protocol: grpc  # The starter defaults to http/protobuf (port 4318)
      traces:
        sampler: parentbased_traceidratio
        sampler.arg: '0.1'  # 10% sampling (production)

    @RestController
    @RequiredArgsConstructor
    public class OrderController {

        private final Tracer tracer;
        private final OrderService orderService;

        @PostMapping("/api/orders")
        public ResponseEntity<OrderResponse> createOrder(@RequestBody OrderRequest request) {
            Span span = Span.current();
            span.setAttribute("order.customer_id", request.getCustomerId());
            span.setAttribute("order.total", request.getTotal().doubleValue());

            OrderResponse response = orderService.create(request);
            span.setAttribute("order.id", response.getOrderId());

            return ResponseEntity.status(HttpStatus.CREATED).body(response);
        }
    }

    // Manual Span creation
    @Service
    public class PaymentService {

        @Autowired
        private Tracer tracer;

        public PaymentResult processPayment(Order order) {
            Span span = tracer.spanBuilder("process_payment")
                .setAttribute("payment.amount", order.getTotal().doubleValue())
                .startSpan();

            try (Scope scope = span.makeCurrent()) {
                PaymentResult result = paymentGateway.charge(order);
                span.setAttribute("payment.txn_id", result.getTransactionId());
                return result;
            } catch (Exception e) {
                span.setStatus(StatusCode.ERROR, e.getMessage());
                span.recordException(e);
                throw e;
            } finally {
                span.end();
            }
        }
    }

OTel Collector Configuration

    # otel-collector-config.yaml
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317
          http:
            endpoint: 0.0.0.0:4318

    processors:
      batch:
        timeout: 5s
        send_batch_size: 1024
        send_batch_max_size: 2048
      memory_limiter:
        check_interval: 1s
        limit_mib: 400  # Keep below the container memory limit
        spike_limit_mib: 128
      attributes:
        actions:
          - key: environment
            value: production
            action: upsert
      tail_sampling:
        decision_wait: 10s
        policies:
          - name: errors
            type: status_code
            status_code: { status_codes: [ERROR] }
          - name: slow-traces
            type: latency
            latency: { threshold_ms: 1000 }
          - name: probabilistic
            type: probabilistic
            probabilistic: { sampling_percentage: 10 }

    exporters:
      otlp/jaeger:
        endpoint: jaeger:4317
        tls:
          insecure: true
      otlp/tempo:
        endpoint: tempo:4317
        tls:
          insecure: true
      prometheus:
        endpoint: 0.0.0.0:8889

    service:
      pipelines:
        traces:
          receivers: [otlp]
          # memory_limiter runs first; batch runs after tail_sampling so that
          # only the sampled Spans are batched for export
          processors: [memory_limiter, attributes, tail_sampling, batch]
          exporters: [otlp/jaeger]
        metrics:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [prometheus]
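The three `tail_sampling` policies above amount to "keep the Trace if any policy says so". The sketch below models that decision in plain Python to show the logic only. `FinishedSpan` and `keep_trace` are hypothetical names, the random draw stands in for however the Collector implements its probabilistic policy, and the exact threshold comparison is an assumption.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class FinishedSpan:
    """Hypothetical record of a completed Span; not a Collector data type."""
    name: str
    start_ms: int
    end_ms: int
    status: str = "OK"


def keep_trace(spans: list[FinishedSpan],
               latency_threshold_ms: int = 1000,
               sampling_percentage: float = 10.0,
               rng: Optional[random.Random] = None) -> str:
    """Return the name of the first policy that keeps the Trace, or "drop".

    Called once all Spans of a Trace have arrived (after decision_wait).
    """
    # Policy "errors": any Span with ERROR status keeps the whole Trace
    if any(span.status == "ERROR" for span in spans):
        return "errors"
    # Policy "slow-traces": end-to-end duration above the threshold
    duration = max(s.end_ms for s in spans) - min(s.start_ms for s in spans)
    if duration > latency_threshold_ms:
        return "slow-traces"
    # Policy "probabilistic": keep a fixed share of the remaining Traces
    draw = (rng or random).random() * 100
    if draw < sampling_percentage:
        return "probabilistic"
    return "drop"


slow = [FinishedSpan("gateway", 0, 1500), FinishedSpan("db", 100, 1400)]
decision = keep_trace(slow)
```

Because the decision needs the whole Trace, the Collector has to buffer Spans for `decision_wait` before exporting, which is the memory cost of tail sampling.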

Kubernetes Deployment

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: otel-collector
    spec:
      replicas: 2  # With tail_sampling, all Spans of a Trace must reach the same replica
      selector:
        matchLabels:
          app: otel-collector
      template:
        metadata:
          labels:
            app: otel-collector  # Must match spec.selector.matchLabels
        spec:
          containers:
            - name: collector
              image: otel/opentelemetry-collector-contrib:0.96.0
              args: ['--config=/conf/config.yaml']
              ports:
                - containerPort: 4317  # gRPC
                - containerPort: 4318  # HTTP
                - containerPort: 8889  # Prometheus metrics
              resources:
                requests:
                  cpu: 200m
                  memory: 256Mi
                limits:
                  cpu: 1000m
                  memory: 512Mi
              volumeMounts:
                - name: config
                  mountPath: /conf
          volumes:
            - name: config
              configMap:
                name: otel-collector-config
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: otel-collector
    spec:
      selector:
        app: otel-collector
      ports:
        - name: grpc
          port: 4317
        - name: http
          port: 4318

Sampling Strategies

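    from opentelemetry.sdk.trace.sampling import (
        ALWAYS_OFF,
        ALWAYS_ON,
        Decision,
        ParentBasedTraceIdRatio,
        Sampler,
        SamplingResult,
        TraceIdRatioBased,
    )

    # Development: collect everything
    dev_sampler = ALWAYS_ON

    # Production: 10% sampling (follows parent Span decision)
    prod_sampler = ParentBasedTraceIdRatio(0.1)

    # Custom sampler: 100% for Spans flagged as errors at creation, 5% for normal.
    # A head sampler only sees attributes passed when the Span starts; errors
    # raised later can only be caught by tail sampling in the Collector.
    class SmartSampler(Sampler):
        def should_sample(self, parent_context, trace_id, name, kind=None,
                          attributes=None, links=None, trace_state=None):
            # Always collect Spans that start with error=True
            if attributes and attributes.get("error") is True:
                return SamplingResult(Decision.RECORD_AND_SAMPLE, attributes)

            # 5% for normal
            if trace_id % 100 < 5:
                return SamplingResult(Decision.RECORD_AND_SAMPLE, attributes)

            return SamplingResult(Decision.DROP)

        def get_description(self) -> str:
            return "SmartSampler"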
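Ratio-based samplers such as `ParentBasedTraceIdRatio(0.1)` derive the decision from the Trace ID rather than from a random draw, so every service reaches the same verdict for the same Trace. The sketch below illustrates that idea. Comparing the low 64 bits of the Trace ID against a bound reflects how the Python SDK is understood to work, but treat the details as an assumption; `ratio_bound` and `is_sampled` are hypothetical names.

```python
import random

TRACE_ID_LIMIT = (1 << 64) - 1  # only the low 64 bits take part in the decision


def ratio_bound(rate: float) -> int:
    """Upper bound below which a Trace ID counts as sampled."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    return round(rate * (TRACE_ID_LIMIT + 1))


def is_sampled(trace_id: int, rate: float) -> bool:
    """Deterministic: the same Trace ID and rate always give the same answer."""
    return (trace_id & TRACE_ID_LIMIT) < ratio_bound(rate)


# Rough check with random 128-bit Trace IDs (seeded for repeatability)
rng = random.Random(42)
trace_ids = [rng.getrandbits(128) for _ in range(20000)]
kept = sum(is_sampled(trace_id, 0.1) for trace_id in trace_ids)
```

With uniformly distributed Trace IDs, `kept` lands close to 10% of the total. The `trace_id % 100 < 5` check in the custom sampler above relies on the same property.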

Using Jaeger UI

    # Run Jaeger with Docker
    docker run -d --name jaeger \
        -p 16686:16686 \
        -p 4317:4317 \
        -p 4318:4318 \
        jaegertracing/all-in-one:1.62

    # Access http://localhost:16686 in your browser

Key features:

1. Trace Search

- Select Service → Filter by Operation → Duration range

- Tag search: http.status_code=500

2. Trace Timeline (Waterfall)

- Visualize start/end times of each Span

- Distinguish parallel from sequential processing

- View detailed attributes/events/logs for each Span

3. Service Dependency Graph

- Visualize call relationships between services

- Display call frequency and error rate

4. Compare

- Compare two Traces to analyze performance differences

Best Practices

1. Use Semantic Conventions

    from opentelemetry.semconv.trace import SpanAttributes

    # Use standard attributes (cross-vendor compatibility)
    span.set_attribute(SpanAttributes.HTTP_METHOD, "POST")
    span.set_attribute(SpanAttributes.HTTP_TARGET, "/api/orders")  # http.url is for the full URL
    span.set_attribute(SpanAttributes.HTTP_STATUS_CODE, 201)
    span.set_attribute(SpanAttributes.DB_SYSTEM, "postgresql")
    span.set_attribute(SpanAttributes.DB_STATEMENT, "SELECT * FROM orders")

2. Remove Sensitive Information

    # Remove PII in the Collector
    processors:
      attributes:
        actions:
          - key: http.request.header.authorization
            action: delete
          - key: db.statement
            action: hash  # Replace SQL with hash
          - key: user.email
            action: delete
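As a rough picture of what the `delete` and `hash` actions do to a Span's attributes, here is a small sketch in plain Python. `scrub` is a hypothetical helper, and SHA-256 is an assumption chosen for illustration; the Collector's own hash algorithm may differ.

```python
import hashlib
from typing import Mapping

# Keys taken from the Collector configuration above
DELETE_KEYS = {"http.request.header.authorization", "user.email"}
HASH_KEYS = {"db.statement"}


def scrub(attributes: Mapping[str, object]) -> dict[str, object]:
    """Return a copy with sensitive keys removed or replaced by a digest."""
    cleaned: dict[str, object] = {}
    for key, value in attributes.items():
        if key in DELETE_KEYS:
            continue  # delete: drop the attribute entirely
        if key in HASH_KEYS:
            # hash: keep the key, replace the value with a digest (SHA-256 assumed)
            value = hashlib.sha256(str(value).encode("utf-8")).hexdigest()
        cleaned[key] = value
    return cleaned


span_attributes = {
    "http.method": "POST",
    "user.email": "customer@example.com",
    "db.statement": "SELECT * FROM orders WHERE customer_id = 42",
}
safe_attributes = scrub(span_attributes)
```

Hashing keeps identical statements groupable in the backend without exposing their text, while deletion removes the value altogether.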

3. Error Recording

    try:
        result = external_api.call()
    except Exception as e:
        span.set_status(trace.StatusCode.ERROR, str(e))
        span.record_exception(e)
        # record_exception automatically includes stack trace
        raise
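The two calls record different things: one attaches an event describing the exception, the other changes the Span's status. The sketch below mimics both with plain dictionaries to make the distinction visible. `exception_event` is a hypothetical helper; the `exception.*` attribute names follow the semantic conventions, but the rest is a simplification rather than the SDK's behavior.

```python
import traceback
from datetime import datetime, timezone


def exception_event(exc: BaseException) -> dict:
    """Build a dict shaped like the "exception" event on a Span (simplified)."""
    return {
        "name": "exception",
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "attributes": {
            "exception.type": type(exc).__qualname__,
            "exception.message": str(exc),
            "exception.stacktrace": "".join(
                traceback.format_exception(type(exc), exc, exc.__traceback__)
            ),
        },
    }


try:
    raise TimeoutError("payment gateway timed out")
except TimeoutError as err:
    event = exception_event(err)                              # what record_exception adds
    status = {"code": "ERROR", "description": str(err)}       # what set_status changes
```

An event alone does not mark the Span as failed, and a status alone carries no stack trace, which is why the two are normally used together.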

Quiz

Q1. What is the relationship between Trace, Span, and Context?

A Trace represents the entire path of a request, a Span is a single unit of work within it, and Context is the mechanism that propagates Trace/Span information between services.

Q2. What is the format of W3C Trace Context's traceparent?

The format is {version}-{traceId}-{spanId}-{flags}, where traceId is 32 hex characters, spanId is 16, and version and flags are 2 each. Example: 00-abc123...-span123...-01

Q3. What is the role of the OpenTelemetry Collector?

It handles the pipeline of Receive (ingestion) then Process (processing/filtering/sampling) then Export (transmission). It acts as an intermediate layer between applications and backends (Jaeger, Tempo, etc.).

Q4. What is the difference between Tail Sampling and Head Sampling?

Head Sampling decides whether to collect at the start of a Trace, while Tail Sampling decides after the Trace is complete with full information. Tail Sampling enables more precise control, such as collecting 100% of error Traces.

Q5. Why should you not set the sampling rate to 100% in production?

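At 100%, Trace data volume grows in step with traffic, which increases storage costs and network load and can overload the Collector. The right rate depends on traffic volume; 5-10% is a common starting point, and Tail Sampling can still keep every error Trace.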

Q6. What is the difference between record_exception() and set_status(ERROR)?

record_exception() records an exception event (including stack trace) on the Span, while set_status(ERROR) marks the Span's status as error. Typically both are used together.

Q7. Why use Semantic Conventions?

Using standardized attribute names allows consistent Trace analysis across different vendor backends (Jaeger, Datadog, Grafana, etc.).

Conclusion

OpenTelemetry has established itself as the Observability standard for distributed systems. Start quickly with auto-instrumentation, then add business context with manual instrumentation as needed. Using the OTel Collector as an intermediate layer enables flexible operations including sampling, filtering, and multi-backend transmission.