- Overview

- Architecture Overview

- Environment Setup

- Docker Compose Configuration

- Loki Configuration

- Promtail Configuration

- Running and Verification

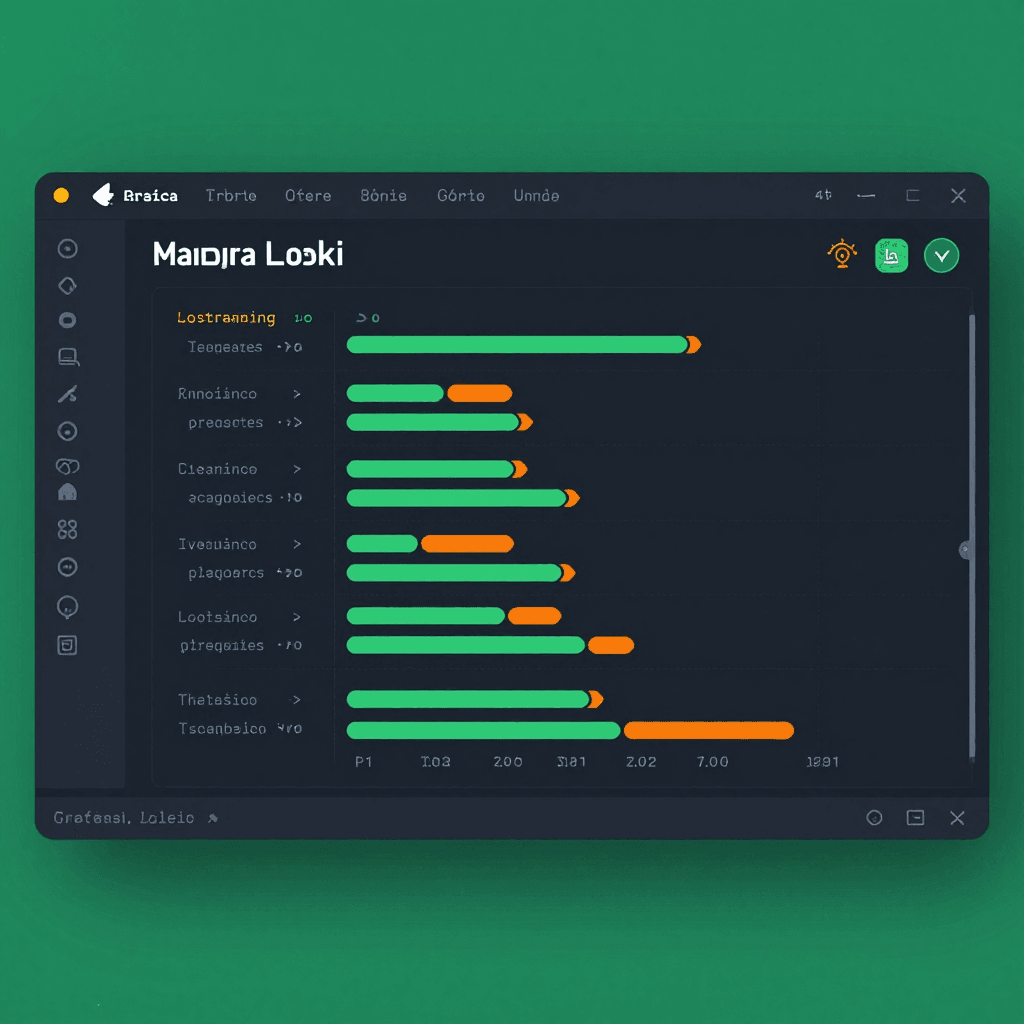

- Connecting Loki in Grafana

- Dashboard Configuration

- Alerting Configuration

- Production Operation Tips

- End-to-End Data Flow Summary

- Conclusion

- Quiz

Overview

In production environments, logs are essential for incident response and debugging. The ELK (Elasticsearch + Logstash + Kibana) stack has long been the standard, but Elasticsearch's high resource consumption and operational complexity have been persistent issues. Grafana Loki addresses these problems: it is a lightweight log aggregation system that indexes only labels, not the log content itself, which substantially reduces storage costs and operational complexity.

In this article, we cover the entire process of building a Promtail to Loki to Grafana pipeline with Docker Compose, and configuring LogQL queries and alert rules.
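To make the label-only indexing idea concrete, here is a toy sketch in Python. It is not how Loki is implemented; the class name TinyLabelStore and the sample log lines are assumptions for illustration. Streams are looked up by label set, and log content is only scanned at query time.

```python
from collections import defaultdict


class TinyLabelStore:
    """Toy model: index label sets only; log lines stay unindexed per stream."""

    def __init__(self):
        # frozenset of (label, value) pairs -> list of raw log lines
        self.streams = defaultdict(list)

    def push(self, labels, line):
        self.streams[frozenset(labels.items())].append(line)

    def query(self, selector, contains=""):
        wanted = set(selector.items())
        hits = []
        for stream_labels, lines in self.streams.items():
            if wanted <= stream_labels:  # cheap lookup on the label index
                # content is scanned grep-style, only inside matching streams
                hits += [line for line in lines if contains in line]
        return hits


store = TinyLabelStore()
store.push({"job": "syslog", "host": "myserver"}, "kernel: disk error on sda")
store.push({"job": "syslog", "host": "myserver"}, "cron: job finished")
store.push({"job": "nginx", "type": "access"}, "GET /index.html 200")

errors = store.query({"job": "syslog"}, contains="error")
print(errors)
```

The index here has two entries (one per label set) no matter how many lines are pushed, which is the property that keeps the index small.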

Architecture Overview

The overall log pipeline flow is as follows:

graph LR

A[Application Logs] -->|tail| B[Promtail]

B -->|HTTP Push| C[Loki]

C -->|Store| D[Object Storage / Filesystem]

C -->|Query| E[Grafana]

E -->|Alert| F[Slack / Email]

The role of each component:

| Component | Role |

|---|---|

| Promtail | Agent that tails log files and sends them to Loki |

| Loki | Log storage. Label indexing + chunk storage |

| Grafana | Log visualization, dashboards, and alert configuration |
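As a rough illustration of the Promtail-to-Loki hop, the sketch below builds the JSON body that Loki's push endpoint (/loki/api/v1/push) accepts. Promtail itself sends the same data as snappy-compressed protobuf; the helper name build_push_payload and the fixed timestamp are assumptions made for this example.

```python
import json
import time


def build_push_payload(labels, lines, now_ns=None):
    """Build a JSON push body: one stream (label set) with [timestamp_ns, line] pairs."""
    ts = str(now_ns if now_ns is not None else time.time_ns())
    return {"streams": [{"stream": labels, "values": [[ts, line] for line in lines]}]}


payload = build_push_payload(
    {"job": "syslog", "host": "myserver"},
    ["disk error on sda"],
    now_ns=1700000000000000000,  # fixed value so the output is reproducible
)
body = json.dumps(payload)
print(body)
```

Timestamps are Unix epoch nanoseconds encoded as strings, and every line in a stream shares the same label set.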

Environment Setup

Project Directory Structure

mkdir -p loki-stack/{config,data}
cd loki-stack

# Directory structure
# loki-stack/
# ├── docker-compose.yml
# ├── config/
# │   ├── loki-config.yml
# │   └── promtail-config.yml
# └── data/

Docker Compose Configuration

docker-compose.yml

version: '3.8'

services:
  loki:
    image: grafana/loki:3.3.2
    container_name: loki
    ports:
      - '3100:3100'
    volumes:
      - ./config/loki-config.yml:/etc/loki/local-config.yaml
      - loki-data:/loki
    command: -config.file=/etc/loki/local-config.yaml
    restart: unless-stopped
    networks:
      - loki-net

  promtail:
    image: grafana/promtail:3.3.2
    container_name: promtail
    volumes:
      - ./config/promtail-config.yml:/etc/promtail/config.yml
      - /var/log:/var/log:ro
      - /var/lib/docker/containers:/var/lib/docker/containers:ro
    command: -config.file=/etc/promtail/config.yml
    depends_on:
      - loki
    restart: unless-stopped
    networks:
      - loki-net

  grafana:
    image: grafana/grafana:11.4.0
    container_name: grafana
    ports:
      - '3000:3000'
    environment:
      - GF_SECURITY_ADMIN_PASSWORD=admin123
      - GF_AUTH_ANONYMOUS_ENABLED=true
    volumes:
      - grafana-data:/var/lib/grafana
    depends_on:
      - loki
    restart: unless-stopped
    networks:
      - loki-net

volumes:
  loki-data:
  grafana-data:

networks:
  loki-net:
    driver: bridge

Loki Configuration

config/loki-config.yml

auth_enabled: false

server:
  http_listen_port: 3100
  grpc_listen_port: 9096
  log_level: info

common:
  instance_addr: 127.0.0.1
  path_prefix: /loki
  storage:
    filesystem:
      chunks_directory: /loki/chunks
      rules_directory: /loki/rules
  replication_factor: 1
  ring:
    kvstore:
      store: inmemory

schema_config:
  configs:
    - from: 2024-01-01
      store: tsdb
      object_store: filesystem
      schema: v13
      index:
        prefix: index_
        period: 24h

limits_config:
  retention_period: 720h # 30-day retention
  max_query_length: 721h
  max_query_parallelism: 4
  ingestion_rate_mb: 10
  ingestion_burst_size_mb: 20

compactor:
  working_directory: /loki/compactor
  compaction_interval: 10m
  retention_enabled: true
  retention_delete_delay: 2h
  delete_request_store: filesystem

Key configuration points:

- schema: v13 selects the latest TSDB schema for improved query performance

- retention_period: 720h deletes logs automatically after 30 days

- auth_enabled: false runs Loki in single-tenant mode (for development/small-scale operations)
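The duration values above are easy to misread, so here is a small arithmetic check in Python. The helper hours is hypothetical and only understands the hour-suffixed durations used in this config; Loki's own parser accepts more units.

```python
def hours(duration):
    """Parse an hours-only duration such as '720h'."""
    if not duration.endswith("h"):
        raise ValueError("this sketch only handles hour durations")
    return int(duration[:-1])


retention_days = hours("720h") / 24
# max_query_length is set one hour above retention, so a single query
# can span the whole retained window.
query_covers_retention = hours("721h") >= hours("720h")
print(retention_days, query_covers_retention)
```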

Promtail Configuration

config/promtail-config.yml

server:
  http_listen_port: 9080
  grpc_listen_port: 0

positions:
  filename: /tmp/positions.yaml

clients:
  - url: http://loki:3100/loki/api/v1/push
    batchwait: 1s
    batchsize: 1048576 # 1MB

scrape_configs:
  # System log collection
  - job_name: system
    static_configs:
      - targets:
          - localhost
        labels:
          job: syslog
          host: myserver
          __path__: /var/log/syslog

  # Docker container log collection
  - job_name: docker
    static_configs:
      - targets:
          - localhost
        labels:
          job: docker
          __path__: /var/lib/docker/containers/**/*.log
    pipeline_stages:
      - docker: {}
      - json:
          expressions:
            stream: stream
            time: time
            log: log
      - labels:
          stream:
      - output:
          source: log

  # Nginx access logs
  - job_name: nginx
    static_configs:
      - targets:
          - localhost
        labels:
          job: nginx
          type: access
          __path__: /var/log/nginx/access.log
    pipeline_stages:
      - regex:
          expression: '^(?P<remote_addr>[\w.]+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] "(?P<method>\w+) (?P<request_uri>\S+) \S+" (?P<status>\d+) (?P<body_bytes_sent>\d+)'
      - labels:
          method:
          status:
      - metrics:
          http_requests_total:
            type: Counter
            description: 'Total HTTP requests'
            # Counter options belong under the config key
            config:
              match_all: true
              action: inc
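To see what the Nginx regex stage extracts, the expression from the config above can be tried on a sample line in Python. Promtail uses Go's RE2 engine, so this is only an approximation that happens to behave the same for this particular pattern; the sample log line is made up.

```python
import re

# Same expression as in promtail-config.yml, split across two raw strings.
NGINX_ACCESS = re.compile(
    r'^(?P<remote_addr>[\w.]+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] '
    r'"(?P<method>\w+) (?P<request_uri>\S+) \S+" (?P<status>\d+) (?P<body_bytes_sent>\d+)'
)

sample = '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 612 "-" "curl/8.0"'

fields = NGINX_ACCESS.match(sample).groupdict()
# The labels stage promotes only these two extracted fields to Loki labels.
labels = {name: fields[name] for name in ("method", "status")}
print(fields)
print(labels)
```

Only method and status become labels; the remaining fields are extracted but stay out of the index, which keeps label cardinality low.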

Pipeline Stages Detailed Flow

Visualizing Promtail's pipeline processing flow:

graph TD

A[Raw Log Line] --> B[docker stage]

B --> C[json stage - Field Extraction]

C --> D[labels stage - Label Assignment]

D --> E[output stage - Final Log]

E --> F[Loki Push]

G[Nginx Log Line] --> H[regex stage - Pattern Matching]

H --> I[labels stage - method, status]

I --> J[metrics stage - Counter Increment]

J --> F
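The first branch of the diagram can be approximated in a few lines of Python. This is a sketch of the idea behind the docker stage, not Promtail's code: the function name docker_stage and the sample record are assumptions, and trimming the trailing newline is a choice made here for readability.

```python
import json


def docker_stage(raw_line):
    """Unwrap one Docker json-file record into (labels, timestamp, output line)."""
    record = json.loads(raw_line)
    labels = {"stream": record["stream"]}  # stdout or stderr
    return labels, record["time"], record["log"].rstrip("\n")


raw = '{"log":"panic: out of memory\\n","stream":"stderr","time":"2024-10-10T13:55:36.123456789Z"}'
labels, timestamp, line = docker_stage(raw)
print(labels, timestamp, line)
```

After this step the entry that reaches Loki is the inner log message, with the stream name attached as a label.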

Running and Verification

# Start the stack

docker compose up -d

# Check status

docker compose ps

# Check Loki status

curl -s http://localhost:3100/ready

# ready

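# Check Promtail readiness (publish 9080:9080 on the promtail service first; the Compose file above does not expose it)

curl -s http://localhost:9080/ready

# Promtail's /targets page is HTML, so open http://localhost:9080/targets in a browser instead of piping it to jq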

# Check labels stored in Loki

curl -s http://localhost:3100/loki/api/v1/labels | jq
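The labels check can also be scripted. The sketch below parses a response body in the shape the labels endpoint returns (a status field and a data list) and reports which expected labels are missing. The helper names and the sample body are assumptions; in practice the body would come from the curl call above.

```python
import json


def labels_from_response(body):
    """Return the label names from a /loki/api/v1/labels response body."""
    doc = json.loads(body)
    if doc.get("status") != "success":
        raise RuntimeError(f"unexpected status: {doc.get('status')!r}")
    return sorted(doc.get("data", []))


def missing_labels(body, expected):
    """List expected label names that Loki has not seen yet."""
    return sorted(set(expected) - set(labels_from_response(body)))


sample_body = '{"status": "success", "data": ["job", "host", "stream"]}'
missing = missing_labels(sample_body, ["job", "host", "method", "status"])
print(missing)
```

A missing method or status label would suggest that no Nginx access log lines have been ingested yet.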

Connecting Loki in Grafana

1. Add Data Source

After accessing Grafana (http://localhost:3000):

- Connections then Data Sources then Add data source

- Select Loki

- URL: http://loki:3100

- Click Save and Test

2. Basic LogQL Queries

Let's run various LogQL queries in the Explore menu:

# View all syslogs

{job="syslog"}

# Filter for ERROR keyword

{job="syslog"} |= "error"

# View only Nginx 5xx errors (status is a label set by the Promtail regex stage)

{job="nginx", type="access", status=~"5.."}

# Regex filter

{job="docker"} |~ "(?i)exception|panic|fatal"

# Error count in the last 1 hour (1-minute intervals)

count_over_time({job="syslog"} |= "error" [1m])

# Top 10 error messages in the last hour

topk(10, sum by (message) (count_over_time({job="syslog"} |= "error" | pattern `<_> error: <message>` [1h])))
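To show what a range aggregation such as count_over_time computes, here is a toy emulation in Python. It is a simplification: timestamps are plain seconds, the data is invented, and the exact handling of window boundaries is an assumption rather than a statement about Loki's behavior.

```python
def count_over_time(timestamps, window, eval_times):
    """For each evaluation time t, count entries with t - window < ts <= t."""
    return {
        t: sum(1 for ts in timestamps if t - window < ts <= t)
        for t in eval_times
    }


# Seconds since an arbitrary start; each value is one line that matched |= "error".
error_ts = [5, 20, 58, 61, 130, 170, 175]

per_minute = count_over_time(error_ts, window=60, eval_times=[60, 120, 180])
print(per_minute)
```

Each evaluation time yields one data point, which is why the query produces a time series that Grafana can graph or alert on.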

LogQL Operator Summary

| Operator | Description | Example |
|---|---|---|
| \|= | Contains string | {job="app"} \|= "error" |
| != | Does not contain | {job="app"} != "debug" |
| \|~ | Regex match | {job="app"} \|~ "err\|warn" |
| !~ | Regex not match | {job="app"} !~ "health" |
| \| json | JSON parsing | {job="app"} \| json |
| \| logfmt | logfmt parsing | {job="app"} \| logfmt |
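The four line filter operators can be mimicked in Python to check intuition about chaining. This is only an illustration: Loki evaluates regexes with Go's RE2, so Python's re module may differ on advanced syntax, and the names FILTERS and apply_filters are made up for this sketch.

```python
import re

FILTERS = {
    "|=": lambda line, arg: arg in line,
    "!=": lambda line, arg: arg not in line,
    "|~": lambda line, arg: re.search(arg, line) is not None,
    "!~": lambda line, arg: re.search(arg, line) is None,
}


def apply_filters(lines, *stages):
    """Keep lines that pass every (operator, argument) stage."""
    return [
        line for line in lines
        if all(FILTERS[op](line, arg) for op, arg in stages)
    ]


logs = ["GET /health 200", "error: db timeout", "WARN slow query", "Error: disk full"]

exact = apply_filters(logs, ("|=", "error"))
chained = apply_filters(logs, ("|~", "(?i)err|warn"), ("!~", "health"))
print(exact)
print(chained)
```

Note that |= is case-sensitive, so "Error: disk full" is only caught by the case-insensitive regex.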

Dashboard Configuration

Provisioning Dashboards with JSON Model

You can create a config/dashboards/logs-overview.json file for automatic provisioning. Grafana picks it up only when a dashboard provider file points at that directory and the directory is mounted into the Grafana container, neither of which is part of the Compose file above. A provisioned file holds the dashboard JSON model itself at the top level:

{
  "title": "Logs Overview",
  "panels": [
    {
      "title": "Error Rate (1m)",
      "type": "timeseries",
      "targets": [
        {
          "expr": "sum(count_over_time({job=~\".+\"} |= \"error\" [1m]))",
          "legendFormat": "errors/min"
        }
      ],
      "gridPos": { "x": 0, "y": 0, "w": 12, "h": 8 }
    },
    {
      "title": "Log Volume by Job",
      "type": "barchart",
      "targets": [
        {
          "expr": "sum by (job) (count_over_time({job=~\".+\"} [5m]))",
          "legendFormat": "{{job}}"
        }
      ],
      "gridPos": { "x": 12, "y": 0, "w": 12, "h": 8 }
    },
    {
      "title": "Recent Errors",
      "type": "logs",
      "targets": [
        {
          "expr": "{job=~\".+\"} |= \"error\""
        }
      ],
      "gridPos": { "x": 0, "y": 8, "w": 24, "h": 10 }
    }
  ]
}

Alerting Configuration

Let's use Grafana's Unified Alerting to send notifications when errors spike:

Contact Point Configuration (Slack)

# Grafana provisioning: config/alerting/contact-points.yml
apiVersion: 1

contactPoints:
  - orgId: 1
    name: slack-alerts
    receivers:
      - uid: slack-1
        type: slack
        settings:
          url: 'https://hooks.slack.com/services/YOUR/WEBHOOK/URL'
          title: '🚨 {{ .CommonLabels.alertname }}'
          text: |
            **Status:** {{ .Status }}
            **Summary:** {{ .CommonAnnotations.summary }}

Creating Alert Rules

In the Grafana UI:

- Alerting then Alert Rules then New Alert Rule

- Query: count_over_time({job="syslog"} |= "error" [5m]) > 50

- Evaluation interval: 1 minute

- Pending period: 5 minutes (to ignore temporary spikes)

- Contact Point: slack-alerts
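The interaction between the evaluation interval and the pending period can be sketched as a small state machine. This is a simplified model, not Grafana's implementation: it assumes one evaluation per minute and that the rule fires once the condition has held for the full pending period after the first breach. The function name and sample counts are invented.

```python
def alert_states(counts, threshold=50, pending_evals=5):
    """Return the rule state after each one-minute evaluation."""
    states, breached_for = [], 0
    for value in counts:
        if value > threshold:
            breached_for += 1
            # The first breach starts the pending timer; firing requires the
            # condition to stay true for pending_evals further evaluations.
            states.append("Alerting" if breached_for > pending_evals else "Pending")
        else:
            breached_for = 0  # any recovery resets the timer
            states.append("Normal")
    return states


# Error counts returned by the query at successive evaluations.
counts = [10, 80, 90, 20, 60, 70, 65, 80, 75, 90]
states = alert_states(counts)
print(states)
```

The two-minute spike at the start never fires because the count drops below the threshold before the pending period elapses; only the sustained breach at the end reaches Alerting.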

Production Operation Tips

1. Multi-Tenant Configuration

# loki-config.yml
auth_enabled: true

# promtail-config.yml: send the tenant ID from Promtail
clients:
  - url: http://loki:3100/loki/api/v1/push
    tenant_id: team-backend
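With auth_enabled: true, Loki reads the tenant from the X-Scope-OrgID request header. The sketch below builds (but does not send) such a request with Python's standard library; the function name and the placeholder body are assumptions for illustration.

```python
import urllib.request


def build_tenant_request(url, tenant_id, body):
    """Prepare a push request that carries the tenant ID in X-Scope-OrgID."""
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json", "X-Scope-OrgID": tenant_id},
    )


req = build_tenant_request(
    "http://loki:3100/loki/api/v1/push",
    "team-backend",
    b'{"streams": []}',  # placeholder body; a real push carries log entries
)
# urllib stores header names via str.capitalize(), hence the casing used here.
tenant = req.get_header("X-scope-orgid")
print(req.get_method(), tenant)
```

Queries need the same header, so a Grafana data source for a multi-tenant Loki is typically configured with X-Scope-OrgID as a custom HTTP header.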

2. Using S3-Compatible Object Storage

# loki-config.yml (production)
# ${VAR} references are expanded only when Loki runs with -config.expand-env=true
common:
  storage:
    s3:
      endpoint: minio:9000
      bucketnames: loki-chunks
      access_key_id: ${MINIO_ACCESS_KEY}
      secret_access_key: ${MINIO_SECRET_KEY}
      insecure: true
      s3forcepathstyle: true

3. Helm Deployment in Kubernetes

# Loki Stack Helm chart
helm repo add grafana https://grafana.github.io/helm-charts
helm repo update

helm install loki grafana/loki-stack \
  --namespace observability \
  --create-namespace \
  --set grafana.enabled=true \
  --set promtail.enabled=true \
  --set loki.persistence.enabled=true \
  --set loki.persistence.size=50Gi

4. Log Volume Control

# promtail-config.yml - Drop unnecessary logs
pipeline_stages:
  - match:
      selector: '{job="nginx"}'
      stages:
        - regex:
            expression: '"(?P<method>\w+) (?P<uri>\S+)'
        - drop:
            expression: '^/health$'
            source: uri
        - drop:
            expression: '^/metrics$'
            source: uri
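The effect of the two drop stages can be previewed offline before deploying the config. The Python sketch below reuses the regex and drop expressions from the snippet above on invented sample lines; the function name keep is hypothetical, and Python's re stands in for Promtail's RE2 engine.

```python
import re

REQUEST = re.compile(r'"(?P<method>\w+) (?P<uri>\S+)')
DROP_URIS = [re.compile(r"^/health$"), re.compile(r"^/metrics$")]


def keep(line):
    """Return False when the extracted uri matches one of the drop expressions."""
    match = REQUEST.search(line)
    if match is None:
        return True  # nothing extracted, so no drop rule applies
    return not any(p.search(match.group("uri")) for p in DROP_URIS)


lines = [
    '10.0.0.1 - - [t] "GET /health HTTP/1.1" 200 2',
    '10.0.0.2 - - [t] "GET /api/orders HTTP/1.1" 500 87',
    '10.0.0.3 - - [t] "GET /metrics HTTP/1.1" 200 4096',
    '10.0.0.4 - - [t] "GET /healthz HTTP/1.1" 200 2',
]
kept = [line for line in lines if keep(line)]
print(kept)
```

Because the expressions are anchored with ^ and $, a path such as /healthz is kept; loosening the anchors would drop it as well.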

End-to-End Data Flow Summary

sequenceDiagram

participant App as Application

participant PT as Promtail

participant LK as Loki

participant S3 as Storage

participant GF as Grafana

participant User as Operator

App->>App: Write logs to /var/log/app.log

PT->>App: Tail log file

PT->>PT: Pipeline processing (parse, label, filter)

PT->>LK: HTTP POST /loki/api/v1/push

LK->>LK: Index labels + compress chunks

LK->>S3: Store chunks & index

User->>GF: Open Dashboard

GF->>LK: LogQL query

LK->>S3: Read chunks

LK->>GF: Return results

GF->>User: Render logs & charts

GF->>GF: Evaluate alert rules

GF-->>User: 🚨 Slack notification (if threshold exceeded)

Conclusion

The Grafana + Loki + Promtail stack enables building an effective log pipeline with significantly fewer resources compared to ELK. Here are the key advantages:

- Low resource usage: Storage and memory savings since log content is not indexed

- Native Grafana integration: Query metrics (Prometheus) and logs (Loki) from a single dashboard

- LogQL: Gentle learning curve with syntax similar to PromQL

- Horizontal scaling: Independent scaling of read/write paths with microservice architecture

For production environments, make sure to consider S3-compatible storage, multi-tenancy, and appropriate retention policies.

Quiz

Q1: What is the core reason Loki has lower storage costs compared to ELK?

Loki does not index the log content itself and only indexes labels. Elasticsearch performs full-text indexing, consuming much more storage and memory.

Q2: What is the role of Promtail's positions file?

It records the last read position (offset) for each log file. When Promtail restarts, it can resume reading from the previous position without duplicate sending or missing entries.

Q3: What is the difference between |= and |~ in LogQL?

|= checks for exact string containment, while |~ performs regex pattern matching. For example: |= "error" checks for the string "error", while |~ "err|warn" matches "err" or "warn".

Q4: What index store does Loki's schema v13 use?

It uses the TSDB (Time Series Database) index store, which improves query performance and compression efficiency over the earlier BoltDB-based index (boltdb-shipper).

Q5: What is the purpose of the drop stage in pipeline stages?

It discards log lines matching specific conditions instead of sending them to Loki. This filters out unnecessary logs such as health checks and metrics endpoints to reduce storage costs.

Q6: How does Promtail distinguish tenants in multi-tenant mode?

When tenant_id is specified in Promtail's clients configuration, it is sent to Loki via the X-Scope-OrgID HTTP header, isolating logs per tenant.

Q7: What is the role of the count_over_time function?

It is a LogQL metric query that counts the number of log lines within a specified time range. For example: count_over_time({job="app"} |= "error" [5m]) returns the number of error logs in the last 5 minutes.

Q8: What is the relationship between Loki's retention_period and the compactor?

retention_period defines the log retention duration, and the compactor is responsible for finding and deleting expired chunks. The compactor's retention_enabled: true must be configured for retention to work.