- Introduction

- What Is VPA?

- Installing VPA

- Basic VPA Configuration

- Enabling In-Place Pod Resize (Kubernetes 1.35+)

- Monitoring the Resize Process

- Production Best Practices

- Troubleshooting

- Practical Example: Applying VPA to a Java Application

- Conclusion

Introduction

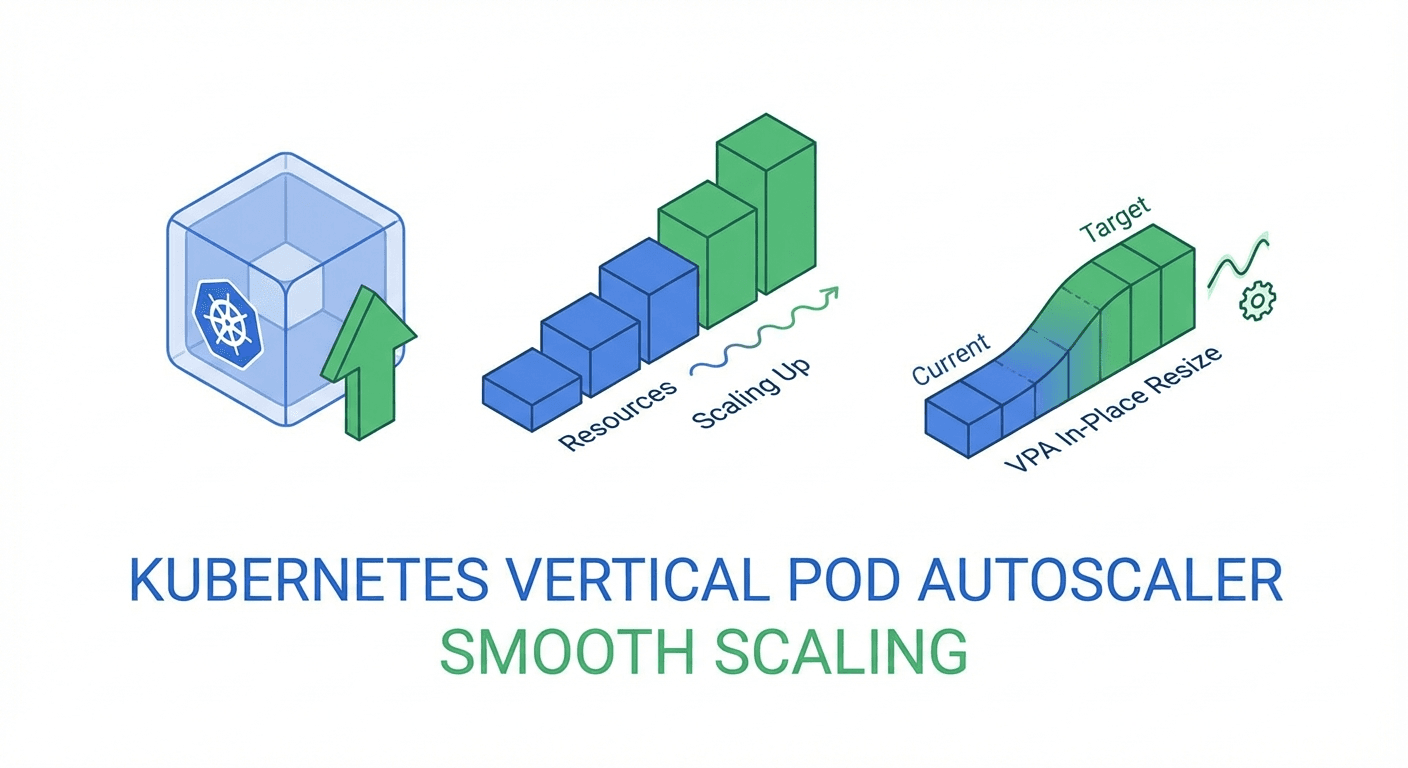

The Vertical Pod Autoscaler (VPA), which automatically adjusts workload resources in Kubernetes, has long had one critical drawback: applying a new recommendation required evicting and recreating the Pod. With In-Place Pod Resize reaching GA in Kubernetes 1.35 (December 2025), CPU and memory can now be adjusted on a running Pod without a restart.

This article walks through VPA fundamentals, In-Place Resize integration, and production deployment strategies step by step.

What Is VPA?

VPA (Vertical Pod Autoscaler) is a Kubernetes component that automatically adjusts CPU/memory requests and limits for Pods.

HPA vs VPA

| Aspect | HPA | VPA |
|---|---|---|
| Scaling direction | Horizontal (Pod count) | Vertical (resource sizing) |
| Best for | Stateless services | Stateful, single-instance workloads |
| Downtime | None | Legacy: restart needed, 1.35+: none |

VPA Components

VPA consists of three components:

1. Recommender: Analyzes resource usage and computes recommendations
2. Updater: Triggers Pod updates based on recommendations
3. Admission Controller: Applies recommendations to newly created Pods

Installing VPA

Installing with Helm

# Add VPA Helm chart
helm repo add fairwinds-stable https://charts.fairwinds.com/stable
helm repo update

# Install VPA
helm install vpa fairwinds-stable/vpa \
  --namespace vpa-system \
  --create-namespace \
  --set recommender.enabled=true \
  --set updater.enabled=true \
  --set admissionController.enabled=true

# Verify installation
kubectl get pods -n vpa-system

Manual Installation (Official Repository)

git clone https://github.com/kubernetes/autoscaler.git

cd autoscaler/vertical-pod-autoscaler

# Install CRDs and components

./hack/vpa-up.sh

# Verify

kubectl get customresourcedefinitions | grep verticalpodautoscaler

Basic VPA Configuration

Creating a VPA Resource

# vpa-nginx.yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: nginx-vpa
  namespace: default
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx-deployment
  updatePolicy:
    updateMode: 'Auto' # Off, Initial, Recreate, InPlaceOrRecreate, Auto (currently equivalent to Recreate)
  resourcePolicy:
    containerPolicies:
      - containerName: nginx
        minAllowed:
          cpu: '50m'
          memory: '64Mi'
        maxAllowed:
          cpu: '2'
          memory: '2Gi'
        controlledResources: ['cpu', 'memory']
        controlledValues: RequestsAndLimits

kubectl apply -f vpa-nginx.yaml

# Check VPA recommendations

kubectl get vpa nginx-vpa -o yaml

updateMode Options Comparison

# Off: Only provides recommendations, no automatic updates (for monitoring)

updateMode: "Off"

# Initial: Applies recommendations only at Pod creation time

updateMode: "Initial"

# Recreate: Recreates Pods when recommendations change

updateMode: "Recreate"

# Auto: Currently equivalent to Recreate in upstream VPA (it does not resize in place); recent VPA releases mark it as deprecated

updateMode: "Auto"

# InPlaceOrRecreate: Prefers In-Place, falls back to recreate if not possible (Beta; requires a VPA release that ships this mode)

updateMode: "InPlaceOrRecreate"

Enabling In-Place Pod Resize (Kubernetes 1.35+)

Verifying the Feature Gate

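In-Place Pod Resize has been enabled by default since it reached beta in Kubernetes 1.33, and it is GA as of 1.35:

# Check client and server versions (the --short flag was removed in kubectl 1.28)

kubectl version

# Check the InPlacePodVerticalScaling feature gate as reported by the API server

kubectl get --raw /metrics | grep kubernetes_feature_enabled | grep InPlacePodVerticalScaling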

Configuring resizePolicy

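Setting resizePolicy is optional: when it is omitted, both CPU and memory default to NotRequired, so the kubelet tries to resize the container without restarting it. Declaring it explicitly documents the intent and lets you require a restart for a specific resource: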

# deployment-with-resize.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web-app
  template:
    metadata:
      labels:
        app: web-app
    spec:
      containers:
        - name: web
          image: nginx:1.27
          resources:
            requests:
              cpu: '100m'
              memory: '128Mi'
            limits:
              cpu: '500m'
              memory: '512Mi'
          resizePolicy:
            - resourceName: cpu
              restartPolicy: NotRequired # CPU adjusted without restart
            - resourceName: memory
              restartPolicy: NotRequired # Memory also adjusted without restart

kubectl apply -f deployment-with-resize.yaml
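Before handing control to VPA, it can help to confirm by hand that the cluster resizes a Pod in place. The sketch below assumes kubectl v1.32 or newer (which accepts `--subresource resize`) and the `web` container from the Deployment above. The function names `build_resize_patch` and `resize_pod_cpu` are illustrative helpers, not part of kubectl or VPA.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for a manual in-place CPU resize.

# Build the patch body that sets one container's CPU request and limit.
build_resize_patch() {
  local container="$1" cpu_request="$2" cpu_limit="$3"
  printf '{"spec":{"containers":[{"name":"%s","resources":{"requests":{"cpu":"%s"},"limits":{"cpu":"%s"}}}]}}' \
    "$container" "$cpu_request" "$cpu_limit"
}

# Send the patch to the Pod's resize subresource.
resize_pod_cpu() {
  local pod="$1" container="$2" cpu_request="$3" cpu_limit="$4"
  kubectl patch pod "$pod" --subresource resize \
    --patch "$(build_resize_patch "$container" "$cpu_request" "$cpu_limit")"
}

# Example (the Pod name is a placeholder):
#   resize_pod_cpu web-app-xxx web 200m 800m
```

If the RESTARTS column of `kubectl get pod` stays unchanged after the call and the Pod spec shows the new values, the resize was applied in place.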

VPA + InPlaceOrRecreate Integration

# vpa-inplace.yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web-app-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  updatePolicy:
    updateMode: 'InPlaceOrRecreate'
    minReplicas: 2 # Updater only evicts Pods while at least this many replicas are alive
  resourcePolicy:
    containerPolicies:
      - containerName: web
        minAllowed:
          cpu: '50m'
          memory: '64Mi'
        maxAllowed:
          cpu: '4'
          memory: '4Gi'
        controlledResources: ['cpu', 'memory']

kubectl apply -f vpa-inplace.yaml

# Verify In-Place resize events

kubectl get pods -w

# STATUS remains Running while resources change

Monitoring the Resize Process

Verifying Pod Resource Changes

# Check current resources of a Pod

kubectl get pod web-app-xxx -o jsonpath='{.spec.containers[0].resources}'

# Check allocated resources (actually assigned)

kubectl get pod web-app-xxx -o jsonpath='{.status.containerStatuses[0].allocatedResources}'

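# Check resize status (the .status.resize field is deprecated since 1.33; resize state is reported through Pod conditions)

kubectl get pod web-app-xxx -o jsonpath='{.status.conditions[?(@.type=="PodResizePending")]}'

kubectl get pod web-app-xxx -o jsonpath='{.status.conditions[?(@.type=="PodResizeInProgress")]}'

# PodResizePending carries the reason "Deferred" or "Infeasible"; PodResizeInProgress is set while the kubelet applies the change; neither condition present means no resize is outstanding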
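The status checks above can be wrapped in a small polling helper. This is a minimal sketch, assuming a cluster that reports resize state through the `PodResizePending` and `PodResizeInProgress` Pod conditions (Kubernetes 1.33 and later). The function names and the `Settled` label are made up for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helpers that summarize and wait on a Pod's resize state.

# Print Pending:<reason>, InProgress, or Settled for the given Pod.
pod_resize_state() {
  local pod="$1" ns="${2:-default}" pending in_progress
  pending="$(kubectl get pod "$pod" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="PodResizePending")].reason}')"
  in_progress="$(kubectl get pod "$pod" -n "$ns" \
    -o jsonpath='{.status.conditions[?(@.type=="PodResizeInProgress")].status}')"
  if [ -n "$pending" ]; then
    echo "Pending:$pending"   # reason is Deferred or Infeasible
  elif [ "$in_progress" = "True" ]; then
    echo "InProgress"
  else
    echo "Settled"            # no resize condition is present
  fi
}

# Poll until the resize settles; fail on Infeasible or after the timeout (seconds).
wait_for_resize() {
  local pod="$1" ns="${2:-default}" timeout="${3:-60}" interval="${4:-2}"
  local waited=0 state
  while :; do
    state="$(pod_resize_state "$pod" "$ns")"
    [ "$state" = "Settled" ] && return 0
    if [ "$state" = "Pending:Infeasible" ]; then
      echo "resize cannot be satisfied: $state" >&2
      return 1
    fi
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out in state: $state" >&2
      return 1
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done
}

# Example: wait_for_resize web-app-xxx default 120 5
```

Note that `Settled` is also what a Pod reports before any resize has been requested, so call the helper only after a resize has been issued.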

VPA Recommendation Monitoring Script

#!/bin/bash
# watch-vpa.sh - Real-time VPA recommendation monitoring

VPA_NAME=${1:-web-app-vpa}
NAMESPACE=${2:-default}

while true; do
  echo "=== $(date) ==="
  # Prints the recommendation for the first container only
  kubectl get vpa "$VPA_NAME" -n "$NAMESPACE" -o jsonpath='
Target:
  CPU: {.status.recommendation.containerRecommendations[0].target.cpu}
  Memory: {.status.recommendation.containerRecommendations[0].target.memory}
Lower Bound:
  CPU: {.status.recommendation.containerRecommendations[0].lowerBound.cpu}
  Memory: {.status.recommendation.containerRecommendations[0].lowerBound.memory}
Upper Bound:
  CPU: {.status.recommendation.containerRecommendations[0].upperBound.cpu}
  Memory: {.status.recommendation.containerRecommendations[0].upperBound.memory}
'
  echo ""
  sleep 30
done

Prometheus Metrics

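# Key VPA-related metrics (names vary by kube-state-metrics and VPA version; confirm them against your own /metrics endpoints)

# kube_verticalpodautoscaler_status_recommendation_containerrecommendations_target (kube-state-metrics; newer releases no longer ship VPA metrics by default and need a custom resource state configuration)

# vpa_recommender_recommendation_latency_seconds

# vpa_updater_evicted_pods_total

# Pod CPU recommendation vs actual usage

rate(container_cpu_usage_seconds_total[5m])

# vs

kube_verticalpodautoscaler_status_recommendation_containerrecommendations_target{resource="cpu"}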
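The same usage-versus-recommendation comparison can be done ad hoc on values copied from `kubectl top` and the VPA status. The sketch below is a hypothetical helper, not part of any tool. It assumes CPU quantities written either in millicores (`250m`) or as plain decimal cores (`2`, `1.5`), and it does not handle other suffixes.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for comparing CPU usage with a VPA target.

# Convert a CPU quantity such as 250m, 2, or 1.5 to whole millicores.
cpu_to_millicores() {
  local q="$1"
  case "$q" in
    *m) echo "${q%m}" ;;
    *)  awk -v v="$q" 'BEGIN { printf "%d\n", v * 1000 + 0.5 }' ;;
  esac
}

# Print usage as a whole-number percentage of the recommended target.
usage_percent_of_target() {
  local usage target
  usage="$(cpu_to_millicores "$1")"
  target="$(cpu_to_millicores "$2")"
  if [ "$target" -le 0 ]; then
    echo "target must be positive" >&2
    return 1
  fi
  echo $(( 100 * usage / target ))
}

# Example: usage_percent_of_target 180m 250m
```

A result well below 100 suggests the recommendation leaves headroom over current usage, while values at or above 100 suggest the workload is outgrowing the target.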

Production Best Practices

1. Gradual Adoption Strategy

# Step 1: Observe recommendations in Off mode (1-2 weeks)

updateMode: "Off"

# Step 2: Apply only to new Pods with Initial mode

updateMode: "Initial"

# Step 3: Enable automatic adjustment with InPlaceOrRecreate

updateMode: "InPlaceOrRecreate"
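Moving between the steps above does not require re-applying the whole manifest; the mode can be patched on the existing VPA object. The sketch below is one way to do that, with hypothetical function names. It deliberately accepts only the modes used in this rollout plus Recreate.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for stepping a VPA through the rollout modes.

# Succeed only for the update modes this rollout uses.
is_valid_update_mode() {
  case "$1" in
    Off|Initial|Recreate|InPlaceOrRecreate) return 0 ;;
    *) return 1 ;;
  esac
}

# Patch spec.updatePolicy.updateMode on an existing VPA object.
set_vpa_update_mode() {
  local vpa="$1" mode="$2" ns="${3:-default}"
  if ! is_valid_update_mode "$mode"; then
    echo "unsupported updateMode: $mode" >&2
    return 1
  fi
  # Custom resources need a JSON merge patch rather than a strategic merge patch.
  kubectl patch vpa "$vpa" -n "$ns" --type merge \
    -p "{\"spec\":{\"updatePolicy\":{\"updateMode\":\"$mode\"}}}"
}

# Example: set_vpa_update_mode web-app-vpa Initial
```

Validating the mode locally keeps a typo from reaching the API server, where the error message is less direct.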

2. Using with PDB (PodDisruptionBudget)

apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: web-app-pdb
spec:
  minAvailable: 2
  selector:
    matchLabels:
      app: web-app
---
# VPA respects PDBs during Recreate fallback
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web-app-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  updatePolicy:
    updateMode: 'InPlaceOrRecreate'
    minReplicas: 2
    evictionRequirements:
      - resources: ['cpu', 'memory']
        changeRequirement: TargetHigherThanRequests

3. Using HPA and VPA Together

# HPA: CPU-based horizontal scaling
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web-app-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
---
# VPA: Vertical scaling for memory only (CPU managed by HPA)
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web-app-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  updatePolicy:
    updateMode: 'InPlaceOrRecreate'
  resourcePolicy:
    containerPolicies:
      - containerName: web
        controlledResources: ['memory']
        minAllowed:
          memory: '128Mi'
        maxAllowed:
          memory: '4Gi'
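Whether an HPA and a VPA on the same workload really manage separate resources can be checked from the live objects. The sketch below uses hypothetical function names. It reads the HPA's Resource metrics and the VPA's `controlledResources`, and treats an unset `controlledResources` as both cpu and memory, which is the VPA default.

```shell
#!/usr/bin/env bash
# Hypothetical helpers that flag resources managed by both HPA and VPA.

# Print every resource name that appears in both space-separated lists.
overlapping_resources() {
  local hpa_resources="$1" vpa_resources="$2" h v
  for h in $hpa_resources; do
    for v in $vpa_resources; do
      [ "$h" = "$v" ] && echo "$h"
    done
  done
  return 0
}

# Compare an HPA's Resource metrics with a VPA's controlled resources.
check_hpa_vpa_overlap() {
  local hpa="$1" vpa="$2" ns="${3:-default}" hpa_res vpa_res overlap
  hpa_res="$(kubectl get hpa "$hpa" -n "$ns" \
    -o jsonpath='{.spec.metrics[*].resource.name}')"
  vpa_res="$(kubectl get vpa "$vpa" -n "$ns" \
    -o jsonpath='{.spec.resourcePolicy.containerPolicies[*].controlledResources[*]}')"
  # VPA controls both resources when controlledResources is omitted.
  [ -z "$vpa_res" ] && vpa_res="cpu memory"
  overlap="$(overlapping_resources "$hpa_res" "$vpa_res")"
  if [ -n "$overlap" ]; then
    # Unquoted on purpose so several names collapse onto one line.
    echo "conflict:" $overlap
    return 1
  fi
  echo "ok"
}

# Example: check_hpa_vpa_overlap web-app-hpa web-app-vpa
```

With the manifests above, the HPA reports `cpu` and the VPA reports `memory`, so the check prints `ok`.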

4. Visualizing VPA Recommendations with Goldilocks

# Install Goldilocks (VPA recommendation dashboard)
helm install goldilocks fairwinds-stable/goldilocks \
  --namespace goldilocks \
  --create-namespace

# Enable Goldilocks for a namespace
kubectl label namespace default goldilocks.fairwinds.com/enabled=true

# Access the dashboard
kubectl port-forward -n goldilocks svc/goldilocks-dashboard 8080:80

Troubleshooting

When In-Place Resize Is Not Working

# 1. Check Kubernetes version (the --short flag was removed in kubectl 1.28)

kubectl version

# 1.35+ required

# 2. Check the node's Container Runtime

kubectl get nodes -o jsonpath='{.items[*].status.nodeInfo.containerRuntimeVersion}'

# containerd 1.7+ or CRI-O 1.29+ required

# 3. Check the Pod's resizePolicy

kubectl get pod <pod-name> -o jsonpath='{.spec.containers[0].resizePolicy}'

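# 4. Check resize status (Pod conditions replace the deprecated .status.resize field)

kubectl get pod <pod-name> -o jsonpath='{.status.conditions[?(@.type=="PodResizePending")].reason}'

# "Deferred" -> The node cannot fit the new size right now; the kubelet retries later

# "Infeasible" -> The resize cannot be satisfied on this node (for example, the request exceeds node capacity)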

# 5. Check events

kubectl describe pod <pod-name> | grep -A5 "Events"

When VPA Is Not Generating Recommendations

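# Check VPA Recommender logs (the label depends on the install method; list labels with: kubectl get pods -n vpa-system --show-labels)

# With the manual vpa-up.sh install, the components run in kube-system instead of vpa-system

kubectl logs -n vpa-system -l app=vpa-recommender --tail=50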

# Verify metrics-server is working

kubectl top pods

# Check VPA status

kubectl describe vpa <vpa-name>

Practical Example: Applying VPA to a Java Application

apiVersion: apps/v1
kind: Deployment
metadata:
  name: spring-boot-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: spring-boot
  template:
    metadata:
      labels:
        app: spring-boot
    spec:
      containers:
        - name: app
          image: myregistry/spring-boot-app:latest
          resources:
            requests:
              cpu: '500m'
              memory: '512Mi'
            limits:
              cpu: '2'
              memory: '2Gi'
          resizePolicy:
            - resourceName: cpu
              restartPolicy: NotRequired
            - resourceName: memory
              restartPolicy: RestartContainer # JVM sizes its heap at startup, so a new limit needs a restart
          env:
            # Takes effect only if the image's entrypoint passes $JAVA_OPTS to java;
            # JAVA_TOOL_OPTIONS is read by the JVM itself
            - name: JAVA_OPTS
              value: '-XX:+UseContainerSupport -XX:MaxRAMPercentage=75.0'
---
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: spring-boot-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: spring-boot-app
  updatePolicy:
    updateMode: 'InPlaceOrRecreate'
  resourcePolicy:
    containerPolicies:
      - containerName: app
        minAllowed:
          cpu: '200m'
          memory: '256Mi'
        maxAllowed:
          cpu: '4'
          memory: '4Gi'
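To see why the memory policy above uses RestartContainer, it helps to work out the heap ceiling implied by `-XX:MaxRAMPercentage`. The sketch below is a rough approximation with a hypothetical function name. It uses integer arithmetic on a limit given in MiB and ignores any rounding the JVM itself applies.

```shell
#!/usr/bin/env bash
# Hypothetical helper: approximate max heap for a container memory limit.

# Print the approximate max heap in MiB for a limit in MiB and a percentage.
jvm_max_heap_mib() {
  local limit_mib="$1" percent="${2:-75}"
  percent="${percent%%.*}"   # accept "75.0" by dropping the fractional part
  echo $(( limit_mib * percent / 100 ))
}

# Example: jvm_max_heap_mib 2048 75.0
```

With the 2Gi limit above the ceiling is about 1536 MiB, and with the 4Gi maximum allowed by the VPA it is about 3072 MiB. Because the JVM computes this value once at startup, a container resized from 2Gi to 4Gi without a restart would keep running with the smaller heap.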

Conclusion

The GA of In-Place Pod Resize in Kubernetes 1.35 and VPA's InPlaceOrRecreate mode take resource optimization in production environments to the next level. Key takeaways:

- In-Place Resize enables CPU/memory adjustment without Pod restarts

- VPA + InPlaceOrRecreate delivers automated vertical scaling

- When using HPA and VPA together, separate managed resources (HPA=CPU, VPA=Memory)

- Adopt gradually: Off -> Initial -> InPlaceOrRecreate

Quiz (5 Questions)

Q1. What are the three components of VPA?
Answer: Recommender, Updater, Admission Controller

Q2. Which Kubernetes version promoted In-Place Pod Resize to GA?
Answer: Kubernetes 1.35 (December 2025)

Q3. Which VPA updateMode only provides recommendations without automatic updates?
Answer: Off

Q4. What is the recommended resource separation when using HPA and VPA together?
Answer: HPA handles CPU-based horizontal scaling, VPA handles memory-only vertical scaling

Q5. Why should the restartPolicy be set to RestartContainer for memory In-Place Resize in JVM-based applications?
Answer: Because the JVM sizes its heap at startup, it only picks up a new memory limit after the container restarts