Split View: Slack Bot + LangChain RAG 챗봇 구축 실전 가이드 — 사내 문서 검색 봇 만들기

Slack Bot + LangChain RAG 챗봇 구축 실전 가이드 — 사내 문서 검색 봇 만들기

들어가며

"Confluence에서 배포 절차 문서 어디있지?" "Kubernetes 클러스터 접속 방법이 어떻게 되지?"

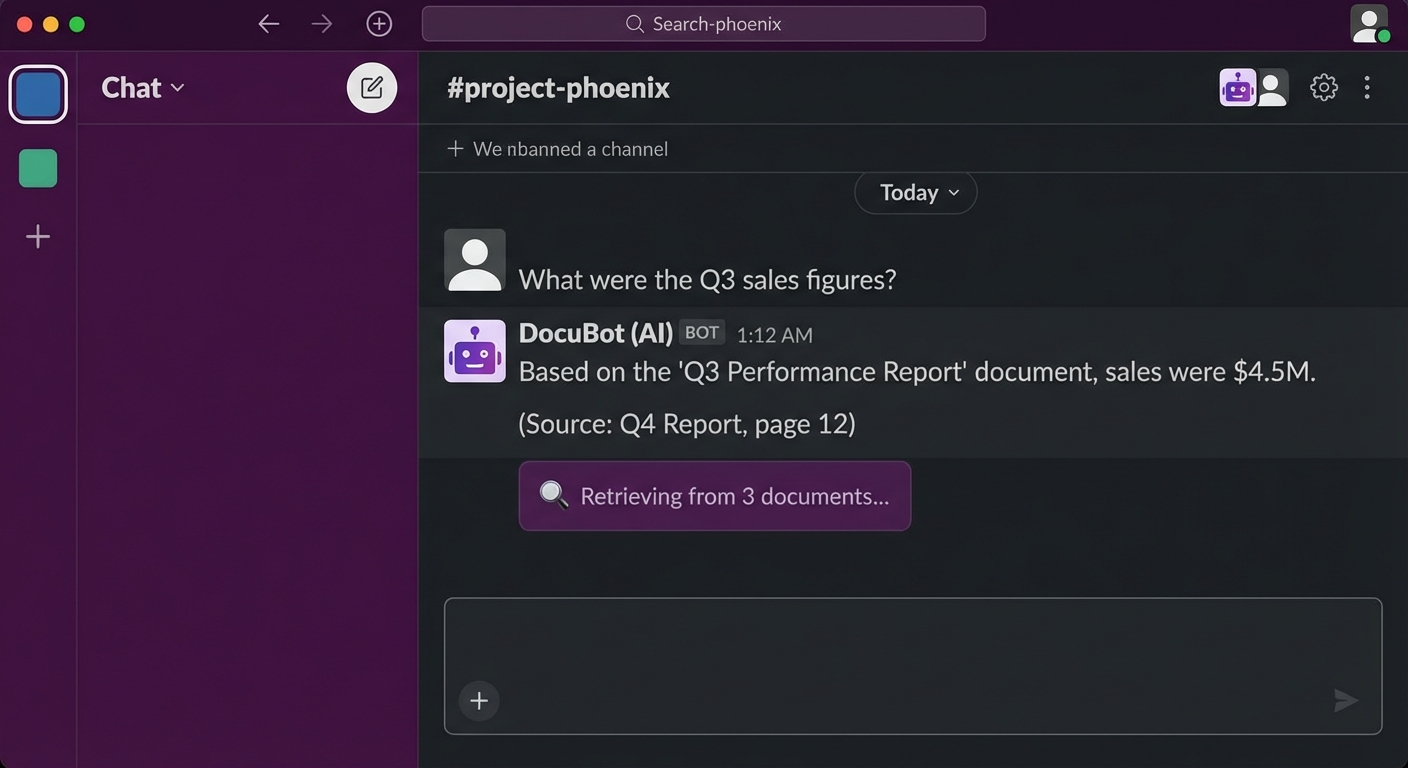

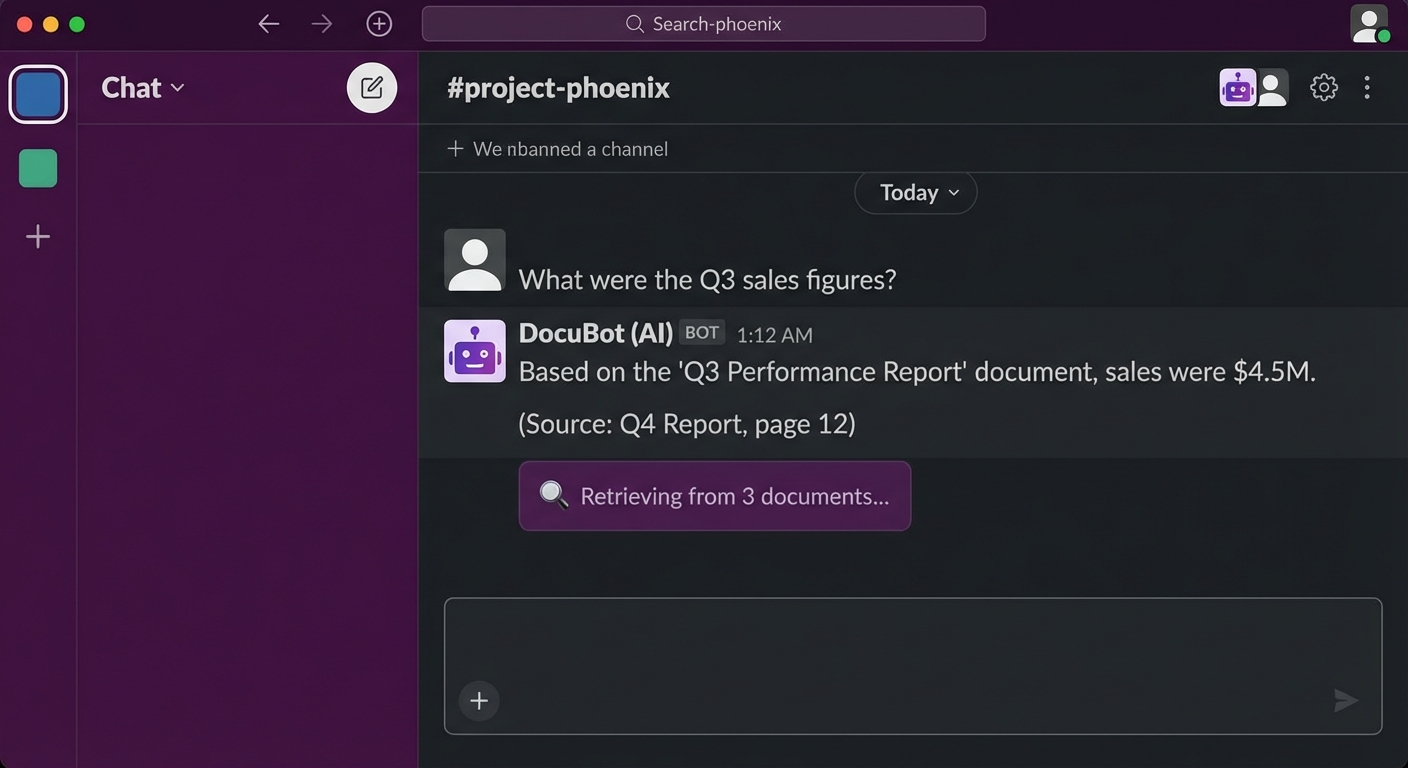

이런 질문에 매번 사람이 답하는 대신, 사내 문서를 검색하는 AI 챗봇을 만들어봅시다. LangChain + RAG(Retrieval-Augmented Generation) + Slack Bot 조합으로 실전 프로덕션 레벨의 챗봇을 구축합니다.

아키텍처 개요

# 인덱싱 파이프라인 (오프라인)

# 문서 → 청킹 → 임베딩 → 벡터 DB(ChromaDB)

# 질의 파이프라인 (온라인)

# Slack 메시지 → 임베딩 → 벡터 검색 → LLM 생성 → Slack 응답

프로젝트 설정

의존성 설치

mkdir slack-rag-bot && cd slack-rag-bot

# 가상환경

python -m venv .venv

source .venv/bin/activate

# 의존성

pip install \

langchain==0.2.16 \

langchain-openai==0.1.25 \

langchain-community==0.2.16 \

chromadb==0.5.3 \

slack-bolt==1.20.0 \

python-dotenv==1.0.1 \

unstructured==0.15.0 \

tiktoken==0.7.0

환경 변수

# .env

OPENAI_API_KEY=sk-xxx

SLACK_BOT_TOKEN=xoxb-xxx

SLACK_APP_TOKEN=xapp-xxx

SLACK_SIGNING_SECRET=xxx

CHROMA_PERSIST_DIR=./chroma_db

DOCS_DIR=./documents

프로젝트 구조

slack-rag-bot/

├── .env

├── main.py # Slack Bot 엔트리포인트

├── indexer.py # 문서 인덱싱

├── rag_chain.py # RAG 체인

├── config.py # 설정

├── documents/ # 사내 문서 (Markdown, PDF 등)

│ ├── deployment-guide.md

│ ├── k8s-access.md

│ └── onboarding.pdf

└── chroma_db/ # 벡터 DB 저장소

문서 인덱싱

문서 로드 및 청킹

# indexer.py

import os

from pathlib import Path

from langchain_community.document_loaders import (

DirectoryLoader,

UnstructuredMarkdownLoader,

PyPDFLoader,

TextLoader

)

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain_openai import OpenAIEmbeddings

from langchain_community.vectorstores import Chroma

from dotenv import load_dotenv

load_dotenv()

def load_documents(docs_dir: str):

"""다양한 형식의 문서 로드"""

documents = []

# Markdown 파일

md_loader = DirectoryLoader(

docs_dir,

glob="**/*.md",

loader_cls=UnstructuredMarkdownLoader,

show_progress=True

)

documents.extend(md_loader.load())

# PDF 파일

pdf_loader = DirectoryLoader(

docs_dir,

glob="**/*.pdf",

loader_cls=PyPDFLoader,

show_progress=True

)

documents.extend(pdf_loader.load())

# 텍스트 파일

txt_loader = DirectoryLoader(

docs_dir,

glob="**/*.txt",

loader_cls=TextLoader,

show_progress=True

)

documents.extend(txt_loader.load())

print(f"총 {len(documents)}개 문서 로드됨")

return documents

def split_documents(documents):

"""문서를 청크로 분할"""

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=1000,

chunk_overlap=200,

length_function=len,

separators=["\n## ", "\n### ", "\n\n", "\n", " ", ""]

)

chunks = text_splitter.split_documents(documents)

print(f"총 {len(chunks)}개 청크 생성됨")

return chunks

def create_vectorstore(chunks, persist_dir: str):

"""벡터 DB 생성"""

embeddings = OpenAIEmbeddings(

model="text-embedding-3-small",

chunk_size=500

)

vectorstore = Chroma.from_documents(

documents=chunks,

embedding=embeddings,

persist_directory=persist_dir,

collection_metadata={"hnsw:space": "cosine"}

)

print(f"벡터 DB 생성 완료: {persist_dir}")

return vectorstore

def index_documents():

"""전체 인덱싱 파이프라인"""

docs_dir = os.getenv("DOCS_DIR", "./documents")

persist_dir = os.getenv("CHROMA_PERSIST_DIR", "./chroma_db")

# 로드 → 청킹 → 임베딩 → 저장

documents = load_documents(docs_dir)

chunks = split_documents(documents)

vectorstore = create_vectorstore(chunks, persist_dir)

return vectorstore

if __name__ == "__main__":

index_documents()

# 인덱싱 실행

python indexer.py

RAG 체인 구축

# rag_chain.py

import os

from langchain_openai import ChatOpenAI, OpenAIEmbeddings

from langchain_community.vectorstores import Chroma

from langchain.prompts import ChatPromptTemplate

from langchain_core.runnables import RunnablePassthrough

from langchain_core.output_parsers import StrOutputParser

from dotenv import load_dotenv

load_dotenv()

class RAGChain:

def __init__(self):

self.embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

self.vectorstore = Chroma(

persist_directory=os.getenv("CHROMA_PERSIST_DIR", "./chroma_db"),

embedding_function=self.embeddings

)

self.retriever = self.vectorstore.as_retriever(

search_type="mmr", # Maximum Marginal Relevance

search_kwargs={

"k": 5,

"fetch_k": 20,

"lambda_mult": 0.7

}

)

self.llm = ChatOpenAI(

model="gpt-4o-mini",

temperature=0.1,

max_tokens=2000

)

self.chain = self._build_chain()

def _build_chain(self):

"""RAG 체인 구성"""

prompt = ChatPromptTemplate.from_messages([

("system", """당신은 사내 문서 기반 Q&A 어시스턴트입니다.

아래 컨텍스트를 기반으로 질문에 답변하세요.

규칙:

1. 컨텍스트에 있는 정보만 사용하세요.

2. 확실하지 않으면 "관련 문서를 찾지 못했습니다"라고 답하세요.

3. 답변에 출처 문서를 포함하세요.

4. 코드나 명령어가 있으면 코드 블록으로 포맷하세요.

컨텍스트:

{context}"""),

("human", "{question}")

])

def format_docs(docs):

formatted = []

for i, doc in enumerate(docs):

source = doc.metadata.get("source", "unknown")

formatted.append(f"[문서 {i+1}] ({source})\n{doc.page_content}")

return "\n\n---\n\n".join(formatted)

chain = (

{"context": self.retriever | format_docs, "question": RunnablePassthrough()}

| prompt

| self.llm

| StrOutputParser()

)

return chain

def ask(self, question: str) -> dict:

"""질문에 답변"""

# 관련 문서 검색

docs = self.retriever.invoke(question)

# LLM 생성

answer = self.chain.invoke(question)

# 출처 문서 정보

sources = list(set(

doc.metadata.get("source", "unknown") for doc in docs

))

return {

"answer": answer,

"sources": sources,

"num_docs": len(docs)

}

def refresh_index(self):

"""인덱스 새로고침"""

from indexer import index_documents

self.vectorstore = index_documents()

self.retriever = self.vectorstore.as_retriever(

search_type="mmr",

search_kwargs={"k": 5, "fetch_k": 20, "lambda_mult": 0.7}

)

self.chain = self._build_chain()

Slack Bot 연동

Slack App 설정

1. https://api.slack.com/apps 에서 새 앱 생성

2. Socket Mode 활성화

3. Bot Token Scopes 추가:

- app_mentions:read

- chat:write

- im:history

- im:read

- im:write

4. Event Subscriptions 활성화:

- app_mention

- message.im

5. 워크스페이스에 설치

Slack Bot 구현

# main.py

import os

import logging

from slack_bolt import App

from slack_bolt.adapter.socket_mode import SocketModeHandler

from rag_chain import RAGChain

from dotenv import load_dotenv

load_dotenv()

logging.basicConfig(level=logging.INFO)

# Slack App 초기화

app = App(token=os.environ["SLACK_BOT_TOKEN"])

# RAG Chain 초기화

rag = RAGChain()

@app.event("app_mention")

def handle_mention(event, say, client):

"""@멘션으로 질문받기"""

user = event["user"]

text = event["text"]

channel = event["channel"]

thread_ts = event.get("thread_ts", event["ts"])

# 봇 멘션 제거

question = text.split(">", 1)[-1].strip()

if not question:

say(

text="질문을 입력해주세요! 예: `@DocBot 배포 절차 알려줘`",

thread_ts=thread_ts

)

return

# 로딩 메시지

loading_msg = client.chat_postMessage(

channel=channel,

thread_ts=thread_ts,

text=":mag: 문서를 검색하고 있습니다..."

)

try:

# RAG 질의

result = rag.ask(question)

# 응답 포맷

response = f"<@{user}>\n\n{result['answer']}"

if result["sources"]:

sources_text = "\n".join(f"• `{s}`" for s in result["sources"])

response += f"\n\n:page_facing_up: *참고 문서:*\n{sources_text}"

# 로딩 메시지 업데이트

client.chat_update(

channel=channel,

ts=loading_msg["ts"],

text=response

)

except Exception as e:

logging.error(f"RAG error: {e}")

client.chat_update(

channel=channel,

ts=loading_msg["ts"],

text=f"죄송합니다, 오류가 발생했습니다: {str(e)}"

)

@app.event("message")

def handle_dm(event, say):

"""DM으로 질문받기"""

if event.get("channel_type") != "im":

return

if event.get("bot_id"):

return

question = event["text"]

try:

result = rag.ask(question)

response = result["answer"]

if result["sources"]:

sources_text = "\n".join(f"• `{s}`" for s in result["sources"])

response += f"\n\n:page_facing_up: *참고 문서:*\n{sources_text}"

say(text=response)

except Exception as e:

say(text=f"오류가 발생했습니다: {str(e)}")

@app.command("/docbot-reindex")

def handle_reindex(ack, say):

"""슬래시 커맨드로 인덱스 새로고침"""

ack()

say("인덱스를 새로고침합니다... :hourglass_flowing_sand:")

try:

rag.refresh_index()

say("인덱스 새로고침 완료! :white_check_mark:")

except Exception as e:

say(f"인덱스 새로고침 실패: {str(e)}")

if __name__ == "__main__":

handler = SocketModeHandler(app, os.environ["SLACK_APP_TOKEN"])

print("Slack RAG Bot started!")

handler.start()

Docker 배포

# Dockerfile

FROM python:3.11-slim

WORKDIR /app

COPY requirements.txt .

RUN pip install --no-cache-dir -r requirements.txt

COPY . .

# 인덱싱 후 봇 시작

CMD ["python", "main.py"]

# docker-compose.yml

version: '3.8'

services:

slack-rag-bot:

build: .

env_file: .env

volumes:

- ./documents:/app/documents

- ./chroma_db:/app/chroma_db

restart: unless-stopped

# 빌드 및 실행

docker compose up -d

# 로그 확인

docker compose logs -f

성능 최적화

임베딩 캐싱

from langchain.storage import LocalFileStore

from langchain.embeddings import CacheBackedEmbeddings

store = LocalFileStore("./embedding_cache")

cached_embeddings = CacheBackedEmbeddings.from_bytes_store(

underlying_embeddings=OpenAIEmbeddings(model="text-embedding-3-small"),

document_embedding_cache=store,

namespace="text-embedding-3-small"

)

대화 히스토리 (스레드 컨텍스트)

from langchain.memory import ConversationBufferWindowMemory

# 스레드별 메모리 관리

thread_memories = {}

def get_memory(thread_ts: str) -> ConversationBufferWindowMemory:

if thread_ts not in thread_memories:

thread_memories[thread_ts] = ConversationBufferWindowMemory(

k=5,

memory_key="chat_history",

return_messages=True

)

return thread_memories[thread_ts]

마무리

Slack RAG 챗봇 핵심 포인트:

- 문서 청킹: RecursiveCharacterTextSplitter로 의미 단위 분할

- 벡터 검색: MMR(Maximum Marginal Relevance)로 다양한 문서 검색

- 프롬프트: 출처 명시 + 불확실한 경우 솔직하게 답하도록 설계

- Slack 연동: Socket Mode + app_mention/DM 이벤트 처리

- 재인덱싱: 슬래시 커맨드로 문서 업데이트 반영

📝 퀴즈 (7문제)

Q1. RAG의 풀네임과 핵심 아이디어는? Retrieval-Augmented Generation. 외부 지식을 검색하여 LLM의 생성에 활용

Q2. RecursiveCharacterTextSplitter에서 chunk_overlap의 역할은? 청크 간 겹치는 부분을 두어 컨텍스트 손실을 방지

Q3. MMR(Maximum Marginal Relevance) 검색의 장점은? 유사도가 높은 문서만 반환하지 않고, 다양성도 고려하여 중복 줄임

Q4. Slack Socket Mode의 장점은? 별도의 공개 URL/인바운드 포트 없이 WebSocket으로 이벤트 수신 가능

Q5. 프롬프트에서 "컨텍스트에 있는 정보만 사용하세요"라고 명시하는 이유는? LLM의 할루시네이션을 방지하고 문서 기반 정확한 답변 유도

Q6. thread_ts를 사용하는 이유는? Slack 스레드 내에서 대화 컨텍스트를 유지하기 위해

Q7. 임베딩 캐싱의 효과는? 동일 문서에 대한 반복 임베딩 API 호출을 방지하여 비용과 시간 절감

Slack Bot + LangChain RAG Chatbot Practical Guide — Building an Internal Document Search Bot

- Introduction

- Architecture Overview

- Project Setup

- Document Indexing

- Building the RAG Chain

- Slack Bot Integration

- Docker Deployment

- Performance Optimization

- Conclusion

- Quiz

Introduction

"Where's the deployment procedure doc in Confluence?" "How do I access the Kubernetes cluster?"

Instead of having a person answer these questions every time, let's build an AI chatbot that searches internal documents. We'll build a production-ready chatbot by combining LangChain, RAG (Retrieval-Augmented Generation), and a Slack bot.

Architecture Overview

Indexing pipeline (offline):
    Documents → Chunking → Embedding → Vector DB (ChromaDB)

Query pipeline (online):
    Slack message → Embedding → Vector search → LLM generation → Slack response
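To make the two pipelines concrete, here is a minimal, dependency-free sketch. It is not the implementation used in this guide: `embed` is a toy bag-of-words stand-in for a real embedding model, and `build_index` and `search` are hypothetical helper names standing in for the vector DB.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks: list[str]) -> list[tuple[str, Counter]]:
    """Offline step: embed every chunk once and store it next to its text."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def search(index: list[tuple[str, Counter]], question: str, k: int = 1) -> list[str]:
    """Online step: embed the question and return the k most similar chunks."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


index = build_index([
    "deploy the service with the release pipeline",
    "access the kubernetes cluster with kubectl",
])
top = search(index, "how do I access the cluster")
print(top)
```

In the real bot, the retrieved chunks are not returned directly; they are placed into the LLM prompt as context.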

Project Setup

Installing Dependencies

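mkdir slack-rag-bot && cd slack-rag-bot

# Virtual environment
python -m venv .venv
source .venv/bin/activate

# Dependencies (pypdf is required by PyPDFLoader, which indexer.py uses)
pip install \
    langchain==0.2.16 \
    langchain-openai==0.1.25 \
    langchain-community==0.2.16 \
    chromadb==0.5.3 \
    slack-bolt==1.20.0 \
    python-dotenv==1.0.1 \
    unstructured==0.15.0 \
    tiktoken==0.7.0 \
    pypdf

# Record the installed versions; the Dockerfile later installs from this file
pip freeze > requirements.txt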

Environment Variables

# .env
OPENAI_API_KEY=sk-xxx
SLACK_BOT_TOKEN=xoxb-xxx
SLACK_APP_TOKEN=xapp-xxx
SLACK_SIGNING_SECRET=xxx
CHROMA_PERSIST_DIR=./chroma_db
DOCS_DIR=./documents

Project Structure

slack-rag-bot/
├── .env
├── main.py              # Slack Bot entry point
├── indexer.py           # Document indexing
├── rag_chain.py         # RAG chain
├── config.py            # Configuration
├── documents/           # Internal documents (Markdown, PDF, etc.)
│   ├── deployment-guide.md
│   ├── k8s-access.md
│   └── onboarding.pdf
└── chroma_db/           # Vector DB storage

Document Indexing

Loading and Chunking Documents

# indexer.py
import os

from langchain_community.document_loaders import (
    DirectoryLoader,
    UnstructuredMarkdownLoader,
    PyPDFLoader,
    TextLoader,
)
from langchain.text_splitter import RecursiveCharacterTextSplitter
from langchain_openai import OpenAIEmbeddings
from langchain_community.vectorstores import Chroma
from dotenv import load_dotenv

load_dotenv()


def load_documents(docs_dir: str):
    """Load documents in various formats"""
    documents = []

    # Markdown files
    md_loader = DirectoryLoader(
        docs_dir,
        glob="**/*.md",
        loader_cls=UnstructuredMarkdownLoader,
        show_progress=True,
    )
    documents.extend(md_loader.load())

    # PDF files (PyPDFLoader yields one document per page)
    pdf_loader = DirectoryLoader(
        docs_dir,
        glob="**/*.pdf",
        loader_cls=PyPDFLoader,
        show_progress=True,
    )
    documents.extend(pdf_loader.load())

    # Text files
    txt_loader = DirectoryLoader(
        docs_dir,
        glob="**/*.txt",
        loader_cls=TextLoader,
        show_progress=True,
    )
    documents.extend(txt_loader.load())

    print(f"Total {len(documents)} documents loaded")
    return documents


def split_documents(documents):
    """Split documents into chunks"""
    text_splitter = RecursiveCharacterTextSplitter(
        chunk_size=1000,      # maximum characters per chunk
        chunk_overlap=200,    # characters shared between neighbouring chunks
        length_function=len,
        # Try Markdown headings first, then paragraphs, lines, words, characters
        separators=["\n## ", "\n### ", "\n\n", "\n", " ", ""],
    )
    chunks = text_splitter.split_documents(documents)
    print(f"Total {len(chunks)} chunks created")
    return chunks


def create_vectorstore(chunks, persist_dir: str):
    """Create vector DB"""
    embeddings = OpenAIEmbeddings(
        model="text-embedding-3-small",
        chunk_size=500,  # number of texts sent per embedding request
    )
    # Note: from_documents adds to an existing collection. Re-running against the
    # same persist_dir stores the chunks again, so clear the directory first if
    # you want a clean rebuild.
    vectorstore = Chroma.from_documents(
        documents=chunks,
        embedding=embeddings,
        persist_directory=persist_dir,
        collection_metadata={"hnsw:space": "cosine"},
    )
    print(f"Vector DB created at: {persist_dir}")
    return vectorstore


def index_documents():
    """Full indexing pipeline"""
    docs_dir = os.getenv("DOCS_DIR", "./documents")
    persist_dir = os.getenv("CHROMA_PERSIST_DIR", "./chroma_db")

    # Load → Chunk → Embed → Store
    documents = load_documents(docs_dir)
    chunks = split_documents(documents)
    vectorstore = create_vectorstore(chunks, persist_dir)
    return vectorstore


if __name__ == "__main__":
    index_documents()

# Run indexing
python indexer.py
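To see what `chunk_size` and `chunk_overlap` do, here is a simplified sketch of overlapping windows. It is not how `RecursiveCharacterTextSplitter` works internally (that class splits on separators and then merges pieces); `split_with_overlap` is a hypothetical helper that only illustrates the overlap idea on raw characters.

```python
def split_with_overlap(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Cut text into fixed-size windows; each window repeats the tail of the previous one."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


chunks = split_with_overlap("abcdefghij", chunk_size=4, chunk_overlap=2)
print(chunks)  # ['abcd', 'cdef', 'efgh', 'ghij']
```

Each chunk starts with the last two characters of the one before it. With `chunk_size=1000` and `chunk_overlap=200`, a sentence that straddles a boundary has a better chance of appearing whole in at least one chunk.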

Building the RAG Chain

# rag_chain.py
import os

from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from langchain_community.vectorstores import Chroma
from langchain.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser
from dotenv import load_dotenv

load_dotenv()


def format_docs(docs):
    """Label each retrieved chunk with its source and join them into one context string"""
    formatted = []
    for i, doc in enumerate(docs):
        source = doc.metadata.get("source", "unknown")
        formatted.append(f"[Document {i+1}] ({source})\n{doc.page_content}")
    return "\n\n---\n\n".join(formatted)


class RAGChain:
    def __init__(self):
        self.embeddings = OpenAIEmbeddings(model="text-embedding-3-small")
        self.vectorstore = Chroma(
            persist_directory=os.getenv("CHROMA_PERSIST_DIR", "./chroma_db"),
            embedding_function=self.embeddings,
        )
        self.retriever = self.vectorstore.as_retriever(
            search_type="mmr",  # Maximum Marginal Relevance
            search_kwargs={
                "k": 5,              # chunks passed to the LLM
                "fetch_k": 20,       # candidates fetched before MMR re-ranking
                "lambda_mult": 0.7,  # 1.0 = relevance only, 0.0 = maximum diversity
            },
        )
        self.llm = ChatOpenAI(
            model="gpt-4o-mini",
            temperature=0.1,
            max_tokens=2000,
        )
        self.chain = self._build_chain()

    def _build_chain(self):
        """Build the RAG chain"""
        prompt = ChatPromptTemplate.from_messages([
            ("system", """You are an internal document-based Q&A assistant.
Answer questions based on the context below.

Rules:
1. Use only the information in the context.
2. If unsure, answer "I could not find related documents."
3. Include source documents in your answer.
4. Format code or commands in code blocks.

Context:
{context}"""),
            ("human", "{question}"),
        ])
        # Retrieval happens in ask(), so the chain only fills the prompt and calls the LLM.
        return prompt | self.llm | StrOutputParser()

    def ask(self, question: str) -> dict:
        """Answer a question"""
        # Search for relevant documents once, and reuse them for both the
        # prompt context and the source list
        docs = self.retriever.invoke(question)

        # LLM generation
        answer = self.chain.invoke({
            "context": format_docs(docs),
            "question": question,
        })

        # Source document information (deduplicated, stable order)
        sources = sorted({doc.metadata.get("source", "unknown") for doc in docs})

        return {
            "answer": answer,
            "sources": sources,
            "num_docs": len(docs),
        }

    def refresh_index(self):
        """Refresh the index"""
        from indexer import index_documents

        self.vectorstore = index_documents()
        self.retriever = self.vectorstore.as_retriever(
            search_type="mmr",
            search_kwargs={"k": 5, "fetch_k": 20, "lambda_mult": 0.7},
        )
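The retriever's `search_type="mmr"` setting is easier to reason about with a small example. The following is a plain-Python sketch of the MMR selection rule, not the vector store's actual code; `mmr` and `cosine` are hypothetical helpers, and the two-dimensional vectors are made-up stand-ins for embeddings. The `candidates` list plays the role of the `fetch_k` results, of which `k` are kept.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(query: list[float], candidates: list[list[float]], k: int, lambda_mult: float) -> list[int]:
    """Pick k candidate indices, trading relevance to the query against redundancy."""
    selected: list[int] = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:
        def score(i: int) -> float:
            relevance = cosine(query, candidates[i])
            # Similarity to the closest already-selected candidate (0 if none yet)
            redundancy = max(
                (cosine(candidates[i], candidates[j]) for j in selected),
                default=0.0,
            )
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 0.0]
candidates = [
    [1.0, 0.0],  # 0: exact match
    [1.0, 0.0],  # 1: duplicate of 0
    [0.6, 0.8],  # 2: less similar, but different content
]
diverse = mmr(query, candidates, k=2, lambda_mult=0.3)
relevance_only = mmr(query, candidates, k=2, lambda_mult=1.0)
print(diverse, relevance_only)  # [0, 2] [0, 1]
```

A lower `lambda_mult` penalizes near-duplicates more heavily, while `lambda_mult=1.0` reduces to plain similarity ranking. The value 0.7 used above leans toward relevance.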

Slack Bot Integration

Slack App Configuration

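1. Create a new app at https://api.slack.com/apps
2. Enable Socket Mode and generate an app-level token (xapp-...) with the connections:write scope
3. Add Bot Token Scopes:
   - app_mentions:read
   - chat:write
   - im:history
   - im:read
   - im:write
   - commands (needed for the /docbot-reindex slash command)
4. Enable Event Subscriptions and subscribe to these bot events:
   - app_mention
   - message.im
5. Create the /docbot-reindex command under Slash Commands
6. Install to workspace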
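Mixing up the two Slack tokens is an easy mistake at this stage, so a quick sanity check before starting the bot can help. This is a sketch under the assumption that the tokens follow the `xoxb-` and `xapp-` prefixes shown in the `.env` example; `check_slack_env` is a hypothetical helper, not part of Slack Bolt.

```python
# Expected prefixes, taken from the .env example in this guide
REQUIRED_PREFIXES = {
    "SLACK_BOT_TOKEN": "xoxb-",  # bot token
    "SLACK_APP_TOKEN": "xapp-",  # app-level token used by Socket Mode
}


def check_slack_env(env: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the tokens look plausible."""
    problems = []
    for name, prefix in REQUIRED_PREFIXES.items():
        value = env.get(name, "")
        if not value:
            problems.append(f"{name} is not set")
        elif not value.startswith(prefix):
            problems.append(f"{name} should start with {prefix!r}")
    return problems


problems = check_slack_env({"SLACK_BOT_TOKEN": "xapp-123", "SLACK_APP_TOKEN": ""})
print(problems)
```

In `main.py` this could be called as `check_slack_env(dict(os.environ))` right after `load_dotenv()`, exiting early if the list is not empty.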

Slack Bot Implementation

# main.py
import os
import logging

from slack_bolt import App
from slack_bolt.adapter.socket_mode import SocketModeHandler
from rag_chain import RAGChain
from dotenv import load_dotenv

load_dotenv()
logging.basicConfig(level=logging.INFO)

# Initialize Slack App
app = App(token=os.environ["SLACK_BOT_TOKEN"])

# Initialize RAG Chain
rag = RAGChain()


@app.event("app_mention")
def handle_mention(event, say, client):
    """Receive questions via @mention"""
    user = event["user"]
    text = event["text"]
    channel = event["channel"]
    # Reply in the existing thread, or start one under the mentioning message
    thread_ts = event.get("thread_ts", event["ts"])

    # Remove bot mention
    question = text.split(">", 1)[-1].strip()

    if not question:
        say(
            text="Please enter a question! Example: `@DocBot tell me about the deployment process`",
            thread_ts=thread_ts,
        )
        return

    # Loading message
    loading_msg = client.chat_postMessage(
        channel=channel,
        thread_ts=thread_ts,
        text=":mag: Searching documents...",
    )

    try:
        # RAG query
        result = rag.ask(question)

        # Format response
        response = f"<@{user}>\n\n{result['answer']}"
        if result["sources"]:
            sources_text = "\n".join(f"• `{s}`" for s in result["sources"])
            response += f"\n\n:page_facing_up: *Reference documents:*\n{sources_text}"

        # Update loading message
        client.chat_update(
            channel=channel,
            ts=loading_msg["ts"],
            text=response,
        )
    except Exception as e:
        logging.exception("RAG error")
        client.chat_update(
            channel=channel,
            ts=loading_msg["ts"],
            text=f"Sorry, an error occurred: {str(e)}",
        )


@app.event("message")
def handle_dm(event, say):
    """Receive questions via DM"""
    if event.get("channel_type") != "im":
        return
    # Skip the bot's own messages and edit/delete events, which carry no new question
    if event.get("bot_id") or event.get("subtype"):
        return

    question = event.get("text", "").strip()
    if not question:
        return

    try:
        result = rag.ask(question)
        response = result["answer"]
        if result["sources"]:
            sources_text = "\n".join(f"• `{s}`" for s in result["sources"])
            response += f"\n\n:page_facing_up: *Reference documents:*\n{sources_text}"
        say(text=response)
    except Exception as e:
        logging.exception("RAG error")
        say(text=f"An error occurred: {str(e)}")


@app.command("/docbot-reindex")
def handle_reindex(ack, say):
    """Refresh index via slash command"""
    ack()  # acknowledge right away; indexing takes longer than Slack's 3-second limit
    say("Refreshing the index... :hourglass_flowing_sand:")
    try:
        rag.refresh_index()
        say("Index refresh complete! :white_check_mark:")
    except Exception as e:
        logging.exception("Reindex error")
        say(f"Index refresh failed: {str(e)}")


if __name__ == "__main__":
    handler = SocketModeHandler(app, os.environ["SLACK_APP_TOKEN"])
    print("Slack RAG Bot started!")
    handler.start()
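The mention handler strips the bot mention with `text.split(">", 1)`, which assumes the mention comes first. If a user writes the question before the mention, everything before the `>` is dropped and the question ends up empty. One possible alternative, sketched here with a hypothetical `extract_question` helper, removes mention tokens wherever they appear. It assumes Slack's `<@USERID>` mention encoding and strips mentions of any user, not only the bot.

```python
import re

# Slack encodes a user mention as <@U012ABCDEF>, optionally with a |label suffix
MENTION_RE = re.compile(r"<@[A-Z0-9]+(?:\|[^>]+)?>")


def extract_question(text: str) -> str:
    """Remove every user mention and collapse the leftover whitespace."""
    without_mentions = MENTION_RE.sub(" ", text)
    return " ".join(without_mentions.split())


leading = extract_question("<@U012ABCDEF> how do I deploy?")
trailing = extract_question("how do I deploy? <@U012ABCDEF>")
only_mention = extract_question("<@U012ABCDEF>")
print(repr(leading), repr(trailing), repr(only_mention))
```

An empty result still signals that the user sent a mention with no question, so the "Please enter a question" branch keeps working.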

Docker Deployment

# Dockerfile
FROM python:3.11-slim

WORKDIR /app

COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY . .

# Start the bot. The index must already exist in the mounted chroma_db
# directory, so run `python indexer.py` before the first start.
CMD ["python", "main.py"]

# docker-compose.yml
version: '3.8'

services:
  slack-rag-bot:
    build: .
    env_file: .env
    volumes:
      - ./documents:/app/documents
      - ./chroma_db:/app/chroma_db
    restart: unless-stopped

# Build and run
docker compose up -d

# Check logs
docker compose logs -f
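Because the container starts `main.py` directly, the bot answers from whatever is in the mounted `chroma_db` directory. One way to handle a first start with an empty volume is to index only when nothing has been persisted yet. The following is a sketch; `ensure_index` is a hypothetical helper, and "directory is missing or empty" is an assumed heuristic for "no index yet".

```python
from pathlib import Path
from typing import Callable


def ensure_index(persist_dir: str, build_index: Callable[[], object]) -> bool:
    """Run build_index only when persist_dir is missing or empty. Returns True if it ran."""
    path = Path(persist_dir)
    if path.is_dir() and any(path.iterdir()):
        return False  # something is already persisted; leave it alone
    build_index()
    return True
```

In `main.py` this could run before `RAGChain()` is created, for example `ensure_index(os.getenv("CHROMA_PERSIST_DIR", "./chroma_db"), index_documents)`. It avoids re-embedding every document on each container restart.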

Performance Optimization

Embedding Caching

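from langchain.storage import LocalFileStore
from langchain.embeddings import CacheBackedEmbeddings
from langchain_openai import OpenAIEmbeddings

store = LocalFileStore("./embedding_cache")

# Document embeddings are cached on disk; the namespace keeps caches for
# different embedding models apart.
cached_embeddings = CacheBackedEmbeddings.from_bytes_store(
    underlying_embeddings=OpenAIEmbeddings(model="text-embedding-3-small"),
    document_embedding_cache=store,
    namespace="text-embedding-3-small",
)

# Pass cached_embeddings wherever indexer.py uses OpenAIEmbeddings, e.g. as the
# embedding= argument of Chroma.from_documents.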
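The idea behind a cache-backed embedder fits in a few lines. The sketch below is an in-memory illustration, not LangChain's implementation: `CachedEmbedder` and `fake_embed` are hypothetical, and the key scheme (namespace plus a SHA-256 hash of the text) is an assumption chosen for clarity.

```python
import hashlib


class CachedEmbedder:
    """Wraps an embedding function and remembers results keyed by a hash of the text."""

    def __init__(self, embed_fn, namespace: str):
        self.embed_fn = embed_fn
        self.namespace = namespace
        self.cache: dict[str, list[float]] = {}
        self.misses = 0  # how many times the underlying function was called

    def _key(self, text: str) -> str:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        return f"{self.namespace}:{digest}"

    def embed_documents(self, texts: list[str]) -> list[list[float]]:
        vectors = []
        for text in texts:
            key = self._key(text)
            if key not in self.cache:
                self.misses += 1
                self.cache[key] = self.embed_fn(text)
            vectors.append(self.cache[key])
        return vectors


def fake_embed(text: str) -> list[float]:
    """Stand-in for a paid embedding API call."""
    return [float(len(text))]


embedder = CachedEmbedder(fake_embed, namespace="text-embedding-3-small")
first = embedder.embed_documents(["alpha", "beta"])
second = embedder.embed_documents(["alpha", "gamma"])
print(embedder.misses)  # 3: "alpha" was embedded only once
```

Re-indexing a document set in which only a few files changed then costs API calls only for the changed chunks.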

Conversation History (Thread Context)

from langchain.memory import ConversationBufferWindowMemory

# Per-thread memory management: each Slack thread keeps its last 5 exchanges.
# Note: entries are never removed, so this dict grows with the number of threads.
thread_memories = {}


def get_memory(thread_ts: str) -> ConversationBufferWindowMemory:
    if thread_ts not in thread_memories:
        thread_memories[thread_ts] = ConversationBufferWindowMemory(
            k=5,
            memory_key="chat_history",
            return_messages=True,
        )
    return thread_memories[thread_ts]
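A dict keyed by `thread_ts` keeps growing as long as the bot runs. If that is a concern, a bounded variant could look like the sketch below. It uses only the standard library; `ThreadHistory` is a hypothetical class, and the window size and thread limit are illustrative values.

```python
from collections import OrderedDict, deque


class ThreadHistory:
    """Keeps the last `window` turns for at most `max_threads` Slack threads."""

    def __init__(self, window: int = 5, max_threads: int = 1000):
        self.window = window
        self.max_threads = max_threads
        self._threads = OrderedDict()  # thread_ts -> deque of (question, answer)

    def add_turn(self, thread_ts: str, question: str, answer: str) -> None:
        turns = self._threads.setdefault(thread_ts, deque(maxlen=self.window))
        turns.append((question, answer))       # deque drops the oldest turn itself
        self._threads.move_to_end(thread_ts)   # mark as most recently used
        while len(self._threads) > self.max_threads:
            self._threads.popitem(last=False)  # evict the least recently used thread

    def get(self, thread_ts: str) -> list[tuple[str, str]]:
        return list(self._threads.get(thread_ts, ()))


history = ThreadHistory(window=2, max_threads=2)
for i in range(3):
    history.add_turn("1700000000.000100", f"q{i}", f"a{i}")
recent = history.get("1700000000.000100")

history.add_turn("1700000000.000200", "other", "answer")
history.add_turn("1700000000.000300", "third", "answer")
evicted = history.get("1700000000.000100")
print(recent, evicted)
```

The per-thread `deque(maxlen=...)` plays the role of the window size `k`, while the `OrderedDict` caps how many threads are remembered at once.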

Conclusion

Key points for a Slack RAG chatbot:

- Document chunking: split on heading and paragraph boundaries with RecursiveCharacterTextSplitter, with overlap between chunks
- Vector search: retrieve relevant but non-redundant chunks with MMR (Maximum Marginal Relevance)
- Prompting: instruct the model to cite sources and to say so when it cannot find an answer
- Slack integration: Socket Mode + app_mention/DM event handling
- Re-indexing: pick up document updates via a slash command

📝 Quiz (7 Questions)

Q1. What is the full name and core idea of RAG?
A. Retrieval-Augmented Generation. It retrieves external knowledge and uses it to augment LLM generation.

Q2. What is the role of chunk_overlap in RecursiveCharacterTextSplitter?
A. It creates overlapping sections between chunks to prevent context loss.

Q3. What is the advantage of MMR (Maximum Marginal Relevance) search?
A. Instead of returning only the most similar documents, it also considers diversity to reduce redundancy.

Q4. What is the advantage of Slack Socket Mode?
A. It can receive events via WebSocket without a public URL or inbound port.

Q5. Why specify "use only the information in the context" in the prompt?
A. To reduce LLM hallucination and guide accurate document-based answers.

Q6. Why use thread_ts?
A. To maintain conversation context within a Slack thread.

Q7. What is the effect of embedding caching?
A. It prevents redundant embedding API calls for the same documents, saving cost and time.
