- Author: Youngju Kim (@fjvbn20031)

Background of YARN's Creation

When Hadoop was at version 1, MapReduce was the only framework available for processing and transforming data. Its JobTracker handled job execution as well as cluster-wide resource management and scheduling, so it became overloaded and turned into a bottleneck. Hadoop 2 introduced YARN to solve this problem. YARN moved resource management and scheduling out of MapReduce into a separate layer, which lets a broader range of applications, not only MapReduce jobs, run on Hadoop.

YARN Architecture

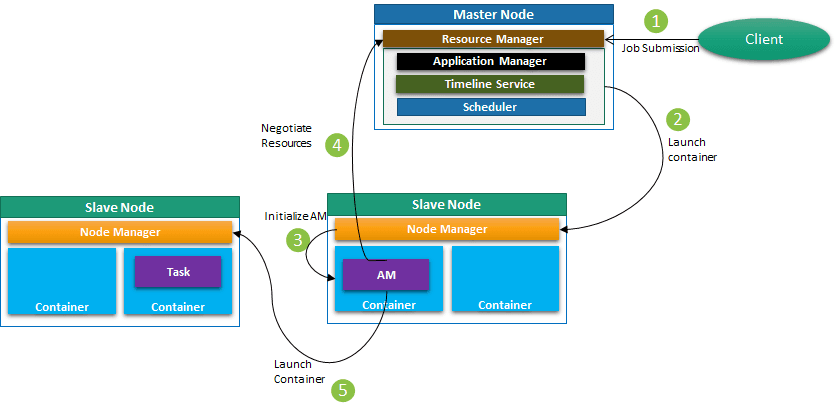

A client submits a job to the ResourceManager (RM), and the RM launches an ApplicationMaster (AM) on one of the NodeManagers. The AM exists once per application, runs inside a container itself, and oversees the application's entire lifecycle. To run the job, the AM requests resources from the RM and receives them as Containers, which are logical bundles of CPU and memory.

Components of YARN

ResourceManager (RM): Centrally manages resources across the entire Hadoop cluster. It has two main components:

- Scheduler: Allocates the resources that applications need. It is pluggable, so operators can choose the policy; the schedulers shipped with Hadoop are the FIFO, Capacity, and Fair schedulers. It is a pure scheduler: it allocates based only on resource requirements, does not monitor application status, and does not restart work after application or hardware failures.

- Application Manager (ApplicationsManager in the Hadoop documentation): Manages the application lifecycle, including submission, execution, and termination. It accepts job submissions, negotiates the first container in which the ApplicationMaster will run, and restarts the ApplicationMaster container if it fails.
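To make "pluggable" concrete, here is a minimal sketch of the idea in plain Java. It is not the real YARN scheduler API: `PendingApp`, `SchedulingPolicy`, `FifoPolicy`, and `FewestContainersFirstPolicy` are hypothetical names invented for illustration, and the second policy is only a rough stand-in for fair-share ordering. In a real cluster the scheduler implementation is selected through the `yarn.resourcemanager.scheduler.class` property in `yarn-site.xml`.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical model of a pluggable scheduling policy; not the real YARN scheduler API.
final class PendingApp {
    final String name;
    final long submitTime;        // arrival order
    final int runningContainers;  // containers the app already holds

    PendingApp(String name, long submitTime, int runningContainers) {
        this.name = name;
        this.submitTime = submitTime;
        this.runningContainers = runningContainers;
    }
}

// The plug-in point: a policy only decides who is offered free resources next.
interface SchedulingPolicy {
    List<String> order(List<PendingApp> apps);
}

final class PolicySupport {
    static List<String> sortedNames(List<PendingApp> apps, Comparator<PendingApp> cmp) {
        List<PendingApp> sorted = new ArrayList<>(apps); // leave the caller's list untouched
        sorted.sort(cmp);
        List<String> names = new ArrayList<>();
        for (PendingApp app : sorted) {
            names.add(app.name);
        }
        return names;
    }
}

// Oldest submission first.
final class FifoPolicy implements SchedulingPolicy {
    @Override
    public List<String> order(List<PendingApp> apps) {
        return PolicySupport.sortedNames(apps,
                Comparator.comparingLong((PendingApp a) -> a.submitTime));
    }
}

// Apps holding the fewest containers go first; ties fall back to arrival order.
final class FewestContainersFirstPolicy implements SchedulingPolicy {
    @Override
    public List<String> order(List<PendingApp> apps) {
        return PolicySupport.sortedNames(apps,
                Comparator.comparingInt((PendingApp a) -> a.runningContainers)
                          .thenComparingLong(a -> a.submitTime));
    }
}
```

Swapping `FifoPolicy` for `FewestContainersFirstPolicy` changes who gets resources next without touching the rest of the ResourceManager, which is the property the pluggable design is after.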

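NodeManager (NM): Runs on each node, monitors that node's resource usage and health, and reports them to the ResourceManager. It launches containers when an ApplicationMaster asks it to (the AM's own container is launched on behalf of the ResourceManager) and stops containers when instructed.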

ApplicationMaster (AM): Instantiated once per application, it manages the execution of that application. It requests resources from the ResourceManager, directs the NodeManagers to run the actual tasks, monitors the application's progress, and adjusts its resource requests as needed.

Container: A bundle of resources needed to run part of an application, typically CPU expressed in virtual cores (vcores) and memory expressed in MB. Allocated by the ResourceManager and managed by the NodeManager.
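The bookkeeping behind "a bundle of resources" can be sketched as follows. This is a simplified model under stated assumptions, not YARN code: `ContainerResource` and `NodeCapacity` are hypothetical names, and the only rule modeled is that a container fits on a node when both its memory and its vcores fit in what is still free.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for a container's resource bundle (memory in MB, CPU in vcores).
final class ContainerResource {
    final int memoryMb;
    final int vcores;

    ContainerResource(int memoryMb, int vcores) {
        this.memoryMb = memoryMb;
        this.vcores = vcores;
    }
}

// Hypothetical per-node accounting: tracks what is in use and what is still free.
final class NodeCapacity {
    private final ContainerResource total;
    private final Map<String, ContainerResource> running = new HashMap<>();
    private int usedMemoryMb;
    private int usedVcores;

    NodeCapacity(ContainerResource total) {
        this.total = total;
    }

    // A container is admitted only if BOTH dimensions fit.
    boolean tryAllocate(String containerId, ContainerResource ask) {
        if (running.containsKey(containerId)) {
            return false; // id already in use on this node
        }
        boolean fits = usedMemoryMb + ask.memoryMb <= total.memoryMb
                && usedVcores + ask.vcores <= total.vcores;
        if (!fits) {
            return false;
        }
        running.put(containerId, ask);
        usedMemoryMb += ask.memoryMb;
        usedVcores += ask.vcores;
        return true;
    }

    // Returns the container's resources to the free pool; unknown ids are ignored.
    void release(String containerId) {
        ContainerResource freed = running.remove(containerId);
        if (freed != null) {
            usedMemoryMb -= freed.memoryMb;
            usedVcores -= freed.vcores;
        }
    }

    int freeMemoryMb() {
        return total.memoryMb - usedMemoryMb;
    }

    int freeVcores() {
        return total.vcores - usedVcores;
    }
}
```

For example, on a node modeled as 8192 MB and 4 vcores, a request for 4096 MB and 3 vcores is refused once 2 vcores are already taken, even though enough memory remains.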

Is the Relationship Between YARN and HDFS Dependent?

Most YARN deployments run on top of HDFS, and both ship in the same Hadoop distribution, so it is easy to assume that one depends on the other. This is a misconception. YARN and HDFS are commonly deployed together and work well as a pair, but HDFS is the storage layer and YARN is the resource management layer, and they are independent components.

YARN Job Submission and Execution Flow

The client submits a job to the ResourceManager. (Before submitting, the client must obtain an Application ID by calling getNewApplication on ClientRMService, which YarnClient.createApplication wraps. The RM answers with a GetNewApplicationResponse that also reports the maximum resources a single request can be allocated in the cluster. Based on this information, the client proceeds with submitApplication.)
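The two-step handshake above can be sketched with a toy model. Everything here is hypothetical (`ToyResourceManager`, `NewAppResponse`, `ToyClient`), and the behavior is an assumption for illustration: the client caps its ApplicationMaster request at the maximum the RM reported, and the RM rejects anything larger. With the real client library, the corresponding calls are `YarnClient.createApplication()`, `GetNewApplicationResponse.getMaximumResourceCapability()`, and `YarnClient.submitApplication(...)`.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical reply to the first call: a fresh id plus the cluster's per-request maximum.
final class NewAppResponse {
    final int applicationId;
    final int maxMemoryMb;
    final int maxVcores;

    NewAppResponse(int applicationId, int maxMemoryMb, int maxVcores) {
        this.applicationId = applicationId;
        this.maxMemoryMb = maxMemoryMb;
        this.maxVcores = maxVcores;
    }
}

// Toy ResourceManager; only models id hand-out and the size check on submission.
final class ToyResourceManager {
    private final int maxMemoryMb;
    private final int maxVcores;
    private final Map<Integer, String> submitted = new HashMap<>();
    private int nextId = 1;

    ToyResourceManager(int maxMemoryMb, int maxVcores) {
        this.maxMemoryMb = maxMemoryMb;
        this.maxVcores = maxVcores;
    }

    // Step 1: hand out an application id and report the maximum capability.
    NewAppResponse getNewApplication() {
        return new NewAppResponse(nextId++, maxMemoryMb, maxVcores);
    }

    // Step 2: accept the submission if the AM request fits within the maximum.
    void submitApplication(int applicationId, String name, int amMemoryMb, int amVcores) {
        if (amMemoryMb > maxMemoryMb || amVcores > maxVcores) {
            throw new IllegalArgumentException("AM request exceeds cluster maximum");
        }
        submitted.put(applicationId, name);
    }

    boolean isSubmitted(int applicationId) {
        return submitted.containsKey(applicationId);
    }
}

final class ToyClient {
    // Asks for an id first, caps the request at the reported maximum, then submits.
    static int submit(ToyResourceManager rm, String name, int wantedMemoryMb, int wantedVcores) {
        NewAppResponse response = rm.getNewApplication();
        int memoryMb = Math.min(wantedMemoryMb, response.maxMemoryMb);
        int vcores = Math.min(wantedVcores, response.maxVcores);
        rm.submitApplication(response.applicationId, name, memoryMb, vcores);
        return response.applicationId;
    }
}
```

Learning the maximum before submitting lets the client shrink an oversized request up front instead of having the submission rejected.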

The submission carries the Application ID, Application Name, Queue Name, Application Priority, and the ContainerLaunchContext for the Application Master. Once these are set, the Resource Manager's Application Manager accepts the application, the RM's internal scheduler selects a NodeManager, and the AMLauncher launches the Application Master on that node.

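The NodeManager receives the launch request from the RM and uses it to launch the AM (Application Master). The request carries the following information (in Hadoop 2.x and later, the container ID, resource, and user travel in the container token that accompanies the ContainerLaunchContext rather than in the context object itself):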

- ContainerId

- Resource: Resources allocated to the container

- User: The user the container is assigned to

- Security token: (Only when Security is enabled)

- LocalResource: Files the container needs in order to run (e.g., jars, shared objects)

- Environment Variables

- Commands: Commands needed to start the container
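As a rough illustration of how the last three items fit together, the sketch below models a launch specification and turns it into the lines of a start-up script. `LaunchSpec` and `toScriptLines` are hypothetical names, and the output format is an assumption that only loosely mirrors what a NodeManager does when it prepares a container: localize the files, export the environment, then run the commands.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, simplified stand-in for the launch information of one container.
final class LaunchSpec {
    final Map<String, String> localResources; // file name -> source URI
    final Map<String, String> environment;    // variable name -> value
    final List<String> commands;               // commands that start the process

    LaunchSpec(Map<String, String> localResources,
               Map<String, String> environment,
               List<String> commands) {
        // Sorted copies keep the generated script deterministic.
        this.localResources = new TreeMap<>(localResources);
        this.environment = new TreeMap<>(environment);
        this.commands = new ArrayList<>(commands);
    }

    // Renders the spec as script lines: localized files, then exports, then commands.
    List<String> toScriptLines() {
        List<String> lines = new ArrayList<>();
        for (String fileName : localResources.keySet()) {
            lines.add("# localized: " + fileName);
        }
        for (Map.Entry<String, String> variable : environment.entrySet()) {
            lines.add("export " + variable.getKey() + "=" + variable.getValue());
        }
        lines.addAll(commands);
        return lines;
    }
}
```

The point of the structure is that the container is fully described by data: the node needs nothing application-specific installed in advance, because the files, environment, and command all arrive with the launch request.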

When the Application Master starts successfully, it registers with the Resource Manager, reporting its RPC port and tracking URL. Registration is required before the AM can request resources from the Resource Manager. ApplicationMasterProtocol is the interface between the ApplicationMaster and the ResourceManager, and the client libraries AMRMClient and AMRMClientAsync wrap it.

The AM then launches the containers it requested from, and was allocated by, the RM on the NodeManagers, and the job runs inside them.
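The register, request, and launch sequence of the last two steps can be sketched as a small loop. This is a toy model, not the YARN client library: `ToyRm` and `ToyAppMaster` are hypothetical, the host name and tracking URL are placeholder values, and the per-call grant limit is an assumption meant to show why an AM usually has to ask more than once. With the real libraries the corresponding calls are `AMRMClient.registerApplicationMaster`, `AMRMClient.addContainerRequest` with `AMRMClient.allocate`, and `NMClient.startContainer`.

```java
import java.util.ArrayList;
import java.util.List;

// Toy ResourceManager side of the AM protocol.
final class ToyRm {
    private final int maxGrantPerCall; // assumption: grants trickle in over several calls
    private int freeContainers;
    private int nextContainerId = 1;
    private boolean registered;

    ToyRm(int freeContainers, int maxGrantPerCall) {
        this.freeContainers = freeContainers;
        this.maxGrantPerCall = maxGrantPerCall;
    }

    void registerApplicationMaster(String host, int rpcPort, String trackingUrl) {
        registered = true;
    }

    // Grants as many containers as are wanted, free, and allowed per call.
    List<Integer> allocate(int wanted) {
        if (!registered) {
            throw new IllegalStateException("AM must register before requesting resources");
        }
        int granted = Math.min(wanted, Math.min(freeContainers, maxGrantPerCall));
        List<Integer> containerIds = new ArrayList<>();
        for (int i = 0; i < granted; i++) {
            containerIds.add(nextContainerId++);
        }
        freeContainers -= granted;
        return containerIds;
    }
}

final class ToyAppMaster {
    // Registers, then keeps asking until every task has a container or the cluster is full.
    static List<String> run(ToyRm rm, int taskCount) {
        rm.registerApplicationMaster("am-host.example", 0, "http://am-host.example/ui");
        List<String> launched = new ArrayList<>();
        int remaining = taskCount;
        while (remaining > 0) {
            List<Integer> granted = rm.allocate(remaining);
            if (granted.isEmpty()) {
                break; // toy shortcut; a real AM would keep heartbeating and wait
            }
            for (int containerId : granted) {
                launched.add("task in container " + containerId); // stands in for a NodeManager launch
            }
            remaining -= granted.size();
        }
        return launched;
    }
}
```

Calling `allocate` before `registerApplicationMaster` fails in this model, which mirrors the rule that registration must come before any resource request.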