Apache Spark in Production: Performance Tuning, Shuffle, Skew, AQE, and Streaming Operations

Author: Youngju Kim (@fjvbn20031)

Introduction

Apache Spark is fast when the data layout, join strategy, and execution plan are aligned with the workload. It becomes expensive when teams treat it as a generic black box and respond to every slowdown by adding more memory or more nodes.

In production, most expensive Spark jobs fail in predictable ways:

- too many small files increase scan overhead

- skewed keys create a few very slow tasks that dominate wall-clock time

- a large shuffle join appears where a broadcast join should have been used

- cache is applied broadly and turns into memory pressure plus spill

- Structured Streaming jobs grow state forever because watermark and checkpoint rules are weak

This guide focuses on the Spark behaviors that matter most in production: shuffle, partitioning, skew, adaptive execution, join planning, cache discipline, and streaming operations.

The execution model that actually matters

For performance work, Spark APIs are less important than the execution model:

- The driver builds the DAG and schedules work.

- Executors run tasks.

- Stage boundaries usually appear where shuffle is introduced.

- The number and quality of partitions largely define the degree of useful parallelism.

That means tuning begins with a few direct questions:

- Which stage is the slowest?

- How large are the shuffle read and shuffle write volumes?

- Are there skewed tasks?

- Is the input file layout producing too many or too few partitions?

- Which operator is really expensive: join, aggregation, sort, or stateful streaming?

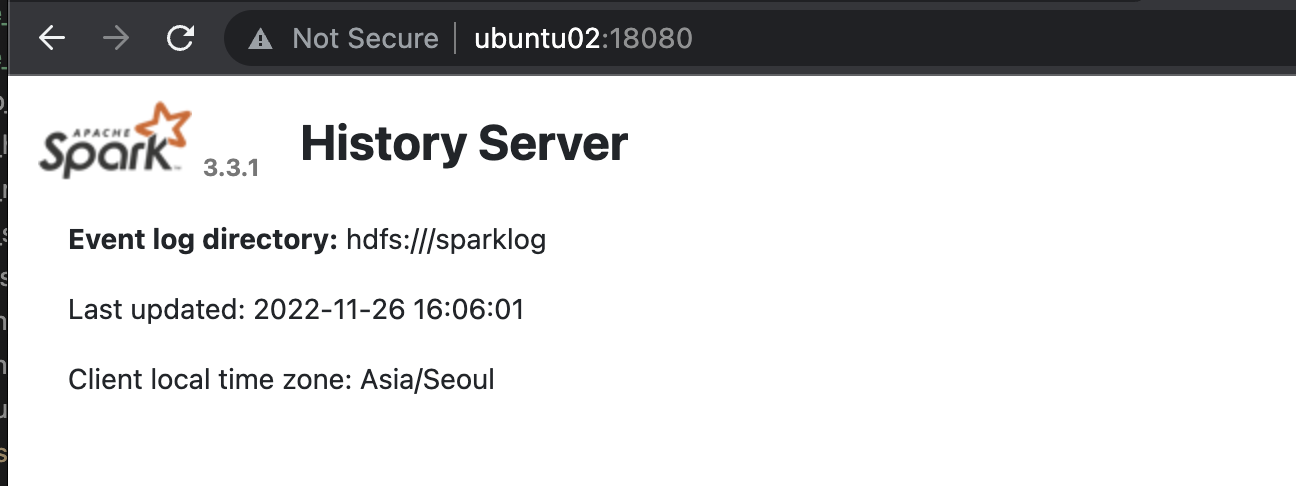

In practice, Spark UI is the first performance tool. The SQL tab, stage metrics, task distribution, and spill behavior usually reveal more than cluster-level averages.

Shuffle, partitioning, and skew decide most outcomes

Why shuffle is expensive

Shuffle combines network transfer, disk I/O, serialization, and often sorting. Cost rises sharply when a workload includes:

- wide aggregations and large sorts

- large joins on poorly distributed keys

- too many small partitions

- hot keys that concentrate data on a subset of tasks

Because shuffle is where cost compounds, most meaningful Spark tuning is about preventing unnecessary data movement or making it more balanced.

Partition count should be deliberate

Too few partitions reduce parallelism. Too many partitions add scheduler overhead, tiny output files, and extra bookkeeping. A fixed cluster-wide number is rarely correct for every job.

The more useful operating rule is:

- size partitions based on data volume and target task duration

- inspect resulting output file counts, not only task counts

- do not leave spark.sql.shuffle.partitions at the default because it happened to work once

- use AQE, but do not assume AQE can compensate for a badly designed source layout

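```scala
// Let Spark re-plan shuffles from runtime statistics
spark.conf.set("spark.sql.adaptive.enabled", "true")
// Merge small shuffle partitions after each stage
spark.conf.set("spark.sql.adaptive.coalescePartitions.enabled", "true")
// Starting partition count for this job; AQE can coalesce downward from here
spark.conf.set("spark.sql.shuffle.partitions", "400")
```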
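One way to make the sizing rule concrete is to derive the partition count from the estimated shuffle volume instead of hard-coding it. The sketch below is plain Scala with no Spark dependency. The helper name, the 128 MiB target, and the core-count floor are assumptions for illustration, and partition size is used here as a rough proxy for task duration.

```scala
// Hypothetical helper (not a Spark API): derive a shuffle partition count
// from an estimated shuffle volume and a target partition size.
object PartitionSizing {
  val MiB: Long = 1024L * 1024L

  def suggestedShufflePartitions(
      shuffleBytes: Long,
      targetPartitionBytes: Long = 128 * MiB, // assumed starting point, tune per job
      totalCores: Int = 1
  ): Int = {
    require(shuffleBytes >= 0 && targetPartitionBytes > 0 && totalCores > 0)
    // Ceiling division: enough partitions that none exceeds the target size on average
    val bySize = ((shuffleBytes + targetPartitionBytes - 1) / targetPartitionBytes).toInt
    // Never go below the core count, so every core has at least one task
    math.max(bySize, totalCores)
  }
}

// Illustrative numbers: ~200 GiB of shuffle data on a 64-core cluster
val partitions = PartitionSizing.suggestedShufflePartitions(
  shuffleBytes = 200L * 1024 * PartitionSizing.MiB,
  totalCores = 64
)
// In a job this would feed the setting shown above:
// spark.conf.set("spark.sql.shuffle.partitions", partitions.toString)
```

With these inputs the helper returns 1600. The shuffle volume itself would come from the shuffle write metric of an earlier run in Spark UI, so the number is only as good as that estimate.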

Skew hides behind averages

Average stage time can look acceptable while a handful of tasks run several times longer than the median. That long tail becomes the real bottleneck.
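A small numeric sketch shows why the mean hides the problem. The task durations below are made up, and the helper is hypothetical. In practice the same numbers can be read from the task summary of a stage in Spark UI.

```scala
// Hypothetical helper: compare the slowest task with the median task.
object SkewCheck {
  def median(xs: Seq[Double]): Double = {
    require(xs.nonEmpty, "need at least one task duration")
    val sorted = xs.sorted
    val mid = sorted.length / 2
    if (sorted.length % 2 == 1) sorted(mid)
    else (sorted(mid - 1) + sorted(mid)) / 2.0
  }

  // Ratio of the slowest task to the median task; values well above 1 suggest skew
  def skewRatio(taskSeconds: Seq[Double]): Double =
    taskSeconds.max / median(taskSeconds)
}

// Made-up durations in seconds: nine tasks near 10 s and one straggler at 120 s
val durations = Seq(9.0, 10.0, 10.0, 11.0, 10.0, 9.0, 11.0, 10.0, 10.0, 120.0)
val mean = durations.sum / durations.size  // 21.0
val ratio = SkewCheck.skewRatio(durations) // 12.0
```

The mean task time is 21 seconds, yet the stage cannot finish before the 120-second task does. A max-to-median ratio of 12 is the signal worth alerting on, not the average.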

The common responses are:

- identify hot keys

- salt or pre-aggregate before the heavy join or aggregation

- isolate small dimensions that can be broadcast

- enable AQE skew handling

- revisit the upstream data model if key concentration is structural

Skew is often not just a Spark configuration issue. It is frequently a data modeling issue showing up at runtime.
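Salting, one of the responses listed above, can be sketched as follows. This is a minimal illustration on toy data, not a recommended default: the table contents, column names, and the bucket count of 8 are assumptions. The large side gets a random salt per row, and the small side is replicated once per salt value so that every salted key still finds its match.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{array, explode, floor, lit, rand}

val spark = SparkSession.builder()
  .master("local[*]")
  .appName("salted-join-sketch")
  .getOrCreate()
import spark.implicits._

// Toy data: customer_id 1 is the hot key
val facts = (Seq.fill(1000)((1, 10.0)) ++ Seq((2, 5.0), (3, 7.5)))
  .toDF("customer_id", "amount")
val dims = Seq((1, "gold"), (2, "silver"), (3, "bronze"))
  .toDF("customer_id", "tier")

val saltBuckets = 8 // assumption: choose based on the observed skew

// Large side: spread each key across saltBuckets sub-keys
val saltedFacts = facts
  .withColumn("salt", floor(rand() * saltBuckets).cast("int"))

// Small side: replicate every row once per possible salt value
val saltedDims = dims
  .withColumn("salt", explode(array((0 until saltBuckets).map(i => lit(i)): _*)))

// Join on the original key plus the salt, then drop the helper column
val joined = saltedFacts
  .join(saltedDims, Seq("customer_id", "salt"))
  .drop("salt")
```

The trade-off is that the small side grows by a factor of saltBuckets, so salting only pays off when the hot keys would otherwise dominate the stage.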

AQE, join strategy, and cache discipline

AQE is a production guardrail, not a magic switch

Adaptive Query Execution is one of the most valuable Spark 3 capabilities. It lets Spark change partitioning and join behavior based on runtime statistics, which is especially helpful when daily data distribution changes.

Still, AQE is not enough by itself:

- runtime statistics only become available after a shuffle stage has materialized, so the first expensive shuffle has already been paid for

- weak filter pushdown can keep the workload unnecessarily large

- poor source partitioning can remain expensive even after adaptive changes

AQE should stay enabled (it has been on by default since Spark 3.2), but it needs to be paired with execution-plan review and source-layout discipline.
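For reference, the AQE settings that relate to skew handling and partition sizing look roughly like this. The snippet assumes an existing SparkSession named spark, as in spark-shell, and the values are illustrative starting points rather than recommendations.

```scala
// Assumes an existing SparkSession named `spark`; all values are illustrative
spark.conf.set("spark.sql.adaptive.enabled", "true")

// Split oversized shuffle partitions in sort-merge joins at runtime
spark.conf.set("spark.sql.adaptive.skewJoin.enabled", "true")

// A partition counts as skewed when it exceeds both
// (factor x median partition size) and the byte threshold
spark.conf.set("spark.sql.adaptive.skewJoin.skewedPartitionFactor", "5")
spark.conf.set("spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes", "256MB")

// Size AQE aims for when coalescing or splitting shuffle partitions
spark.conf.set("spark.sql.adaptive.advisoryPartitionSizeInBytes", "128MB")
```

Whether these thresholds actually trigger for a given job is best confirmed in the SQL tab, where the final adaptive plan shows any skew-join splits.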

Join strategy needs explicit review

The safest operating sequence is:

- Determine whether the smaller table can be broadcast.

- Check key distribution.

- Confirm whether sort-merge join is actually required.

- Validate partition pruning and predicate pushdown.

- Inspect the physical plan to verify that Spark selected the intended strategy.

```scala
import org.apache.spark.sql.functions.broadcast

// Hint Spark to broadcast the small dimension table instead of shuffling both sides
val result = factDf.join(broadcast(dimDf), Seq("customer_id"), "left")
```

Broadcast joins are not always correct, but uninspected joins are a common cause of unnecessary shuffle cost.
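The last step of the sequence, inspecting the physical plan, can be done interactively or as an automated check. The sketch below uses toy DataFrames as stand-ins for factDf and dimDf. The string match on the plan text is a convenience for illustration, not a stable contract across Spark versions.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.broadcast

val spark = SparkSession.builder()
  .master("local[*]")
  .appName("join-plan-sketch")
  .getOrCreate()
import spark.implicits._

// Toy stand-ins for the fact and dimension tables
val factDf = Seq((1, 10.0), (2, 5.0), (1, 2.5)).toDF("customer_id", "amount")
val dimDf = Seq((1, "gold"), (2, "silver")).toDF("customer_id", "tier")

val result = factDf.join(broadcast(dimDf), Seq("customer_id"), "left")

// Print the physical plan in the formatted mode available since Spark 3.0
result.explain("formatted")

// Programmatic check, for example inside a regression test
val planText = result.queryExecution.executedPlan.toString
val usesBroadcast = planText.contains("BroadcastHashJoin")
```

If the plan shows SortMergeJoin where a broadcast was expected, the usual suspects are a dimension table larger than spark.sql.autoBroadcastJoinThreshold or a join type that cannot broadcast the hinted side.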

Cache only when recomputation is more expensive than memory pressure

Cache is valuable when the same expensive intermediate dataset is reused multiple times. It is harmful when used on one-time scans or when executor memory is already tight.

Before caching, check:

- how many times the dataset is reused

- how expensive the original scan or transformation is

- current memory pressure and spill behavior

- when the cached data can be released

In many production pipelines, the real optimization is not calling cache(). It is calling unpersist() at the right moment.
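A minimal sketch of that discipline, assuming a toy dataset in place of a genuinely expensive intermediate result: persist once, reuse the data for every downstream action, and release it in a finally block so that a failure does not leave it pinned in executor memory.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{avg, col, sum}
import org.apache.spark.storage.StorageLevel

val spark = SparkSession.builder()
  .master("local[*]")
  .appName("cache-discipline-sketch")
  .getOrCreate()
import spark.implicits._

// Stand-in for an expensive intermediate dataset
val enriched = Seq(("a", 1.0), ("a", 3.0), ("b", 2.0))
  .toDF("key", "value")
  .filter(col("value") > 0)

// Spill to disk rather than recompute when memory is tight
enriched.persist(StorageLevel.MEMORY_AND_DISK)

val (totals, means) =
  try {
    // Two separate actions reuse the same cached data
    val t = enriched.groupBy("key").agg(sum("value").as("total")).collect()
    val m = enriched.groupBy("key").agg(avg("value").as("mean")).collect()
    (t, m)
  } finally {
    // Release the cache as soon as the last consumer has finished
    enriched.unpersist()
  }
```

If the dataset were used by only one action, the persist call would add memory pressure without saving any recomputation.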

Structured Streaming operations

Structured Streaming looks simple because the API resembles batch Spark. Operationally, it requires much stricter contracts.

Never grow state without a clear watermark strategy

Stateful aggregation and stream-stream joins can expand indefinitely if watermark rules are vague or missing.

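```scala
import org.apache.spark.sql.functions.window
import spark.implicits._ // enables the $"col" syntax; assumes a SparkSession named spark

// Accept events up to 10 minutes late; state for older windows can be dropped
val aggregated =
  events
    .withWatermark("event_time", "10 minutes")
    .groupBy(window($"event_time", "5 minutes"), $"user_id")
    .count()
```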

The core checklist is:

- separate event time from processing time

- choose watermark delay based on actual SLA and lateness patterns

- protect checkpoint storage durability and permissions

- confirm sink idempotency and output mode behavior

- watch late-data ratio and state-store size
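Several items on this checklist map directly to code. The sketch below wires a watermarked aggregation to a sink with an explicit checkpoint location and output mode, and reads state metrics from the query progress. The rate source, the console sink, the temporary checkpoint directory, and the logState helper are all stand-ins chosen so the example is self-contained. A production job would use a durable checkpoint path and an idempotent sink.

```scala
import java.nio.file.Files
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{col, window}
import org.apache.spark.sql.streaming.{StreamingQuery, Trigger}

val spark = SparkSession.builder()
  .master("local[*]")
  .appName("streaming-ops-sketch")
  .getOrCreate()

// The built-in rate source emits (timestamp, value) rows and stands in for a real stream
val events = spark.readStream
  .format("rate")
  .option("rowsPerSecond", "10")
  .load()
  .withColumnRenamed("timestamp", "event_time")
  .withColumn("user_id", col("value") % 5)

val aggregated = events
  .withWatermark("event_time", "10 minutes")
  .groupBy(window(col("event_time"), "5 minutes"), col("user_id"))
  .count()

// Temporary directory for the sketch only; production checkpoints need durable storage
val checkpointDir = Files.createTempDirectory("checkpoint-sketch").toString

val query: StreamingQuery = aggregated.writeStream
  .outputMode("update") // emit only the windows that changed in each trigger
  .option("checkpointLocation", checkpointDir)
  .trigger(Trigger.ProcessingTime("30 seconds"))
  .format("console")
  .start()

// Hypothetical helper: print state-store size and rows dropped as too late
// (numRowsDroppedByWatermark requires Spark 3.1 or later)
def logState(q: StreamingQuery): Unit =
  Option(q.lastProgress).foreach { progress =>
    progress.stateOperators.foreach { op =>
      println(
        s"stateRows=${op.numRowsTotal} memoryBytes=${op.memoryUsedBytes} " +
          s"droppedByWatermark=${op.numRowsDroppedByWatermark}"
      )
    }
  }
```

Calling logState(query) periodically, or exporting the same fields through a StreamingQueryListener, gives the state-size and late-data signals the checklist asks for.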

Streaming reliability is an operating contract

A streaming system is fragile if the team has not documented:

- where checkpoints live

- which configuration changes are safe across redeployments

- whether duplicate outputs are acceptable

- how backfills are handled

- how schema evolution is introduced

Without these rules, the same pipeline can behave differently every time there is a recovery event.

A practical tuning workflow

The most effective tuning process is simple:

- Pick the single slowest stage in Spark UI.

- Inspect shuffle size, spill, and task imbalance.

- Review the physical plan for joins and partition layout.

- Check file layout and pushdown on the source side.

- Change one thing at a time: AQE, broadcast, repartition, pre-aggregation, or cache strategy.

- Measure both runtime and cost.

Spark tuning becomes difficult when teams change too many settings at once and lose the ability to attribute improvements.

Closing thoughts

Good Spark operations are not about permanently increasing cluster size. They are about reducing unnecessary data movement, controlling skew, making joins intentional, and keeping streaming state bounded.

The four habits that matter most are:

- use Spark UI and physical plans for every slow job

- design partition counts and output file counts deliberately

- treat AQE and broadcast strategy as baseline guardrails

- document watermark, state, and checkpoint rules for streaming jobs

Spark is powerful, but production performance comes from operating discipline rather than defaults.